There have been a lot of changes in SEO over the past year alone, and we’re sure that 2021 has even more in store. However, there are pillars of SEO that remain as strong and significant as ever, such as backlinking, website speed, and quality content.

Advanced SEO might feel complicated, but it really all boils down to how much value Google thinks you provide to your users. Be creative, come up with unique approaches to problems, implement industry best practices, and use the right techniques to improve your SERP ranking this year.

Search engine optimization is an inescapable part of doing business online, unless, of course, you plan on paying Google for every click for the rest of your life.

I’m going to assume that the readers of this have a baseline knowledge about SEO. Most people know the SEO drill – keywords, backlinking, business listings…lather, rinse, repeat. 🤔

If you want the basics before you dig into advanced SEO, our Basics of SEO guide covers keywords and keyword research, SEO tools for various SEO tasks, and some technical SEO.

However, there’s another, deeper, more complex layer to advanced search engine optimization, and that’s what this advanced SEO guide is all about.

Utilize and apply these advanced SEO techniques to create a more effective SEO strategy for your client.

Search engine optimization practices are dynamic and ever-changing, and even as I write this, a broad core update is rolling out and affecting the SERPs. With this in mind, it’s no surprise that some guides can contain outdated information (although I’d say that large chunks of SEO remain relevant regardless of the year).

Let’s dig right in!

Search User Intent

Search engine algorithms have gone through a lot of changes over the last decade, but the goal has pretty much remained the same: provide their users with the best possible answers to their queries.

Over time, search engines have adapted and evolved to how people search. Search engines were successful in doing this because they understood that most searches can fall under four categories:

- Informational: The searcher wants to know more about a topic. These can be phrased as a question, such as “what is search engine optimization” or “best free SEO tools.”

- Navigational: The searcher wants to navigate to a particular site, such as The New York Times website, Yelp, etc.

- Transactional: The searcher is interested in transacting, ordering, purchasing, or buying. Transactional queries can be direct/explicit, such as “buy women’s heels”, or include local modifiers like “Jacksonville flower shop”.

- Commercial: The searcher is looking to buy something but is just shopping around. These are the types of search queries that have comparisons – S10 vs. Pixel 3 or Cheap International Phone Carriers, etc.

Knowing the four types of search intent will help you optimize for those searches and target the customers you want. I’ve spoken a little bit more on this in my own article on keyword research, so if you’d like to know how these types of search queries can be applied to your keyword research, give it a quick 5 min read.
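To make the four categories concrete, here is a rough sketch of a rule-based intent tagger you could run over a keyword list. The trigger words and the `known_brands` argument are my own assumptions for illustration, not anything Google publishes, so tune them for your niche.

```python
import re

# Hypothetical trigger words for each intent; adjust these for your own niche.
INTENT_PATTERNS = {
    "transactional": r"\b(buy|order|purchase|coupon|near me)\b",
    "commercial": r"\b(vs|cheap|review|reviews|compare)\b",
    "informational": r"\b(what|how|why|when|who|guide|tips)\b",
}


def classify_intent(query, known_brands=()):
    """Return a rough intent label for a search query."""
    q = query.lower()
    # A query that names a known site or brand is treated as navigational.
    if any(brand.lower() in q for brand in known_brands):
        return "navigational"
    # Check the remaining intents in priority order (dicts keep insertion order).
    for intent, pattern in INTENT_PATTERNS.items():
        if re.search(pattern, q):
            return intent
    return "unknown"


for example in ["buy women's heels", "S10 vs. Pixel 3", "what is search engine optimization"]:
    print(example, "->", classify_intent(example))
```

Queries with only a local modifier, like “Jacksonville flower shop”, come back as "unknown" here, which is a good reminder that simple rules only get you a first pass before a manual review.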

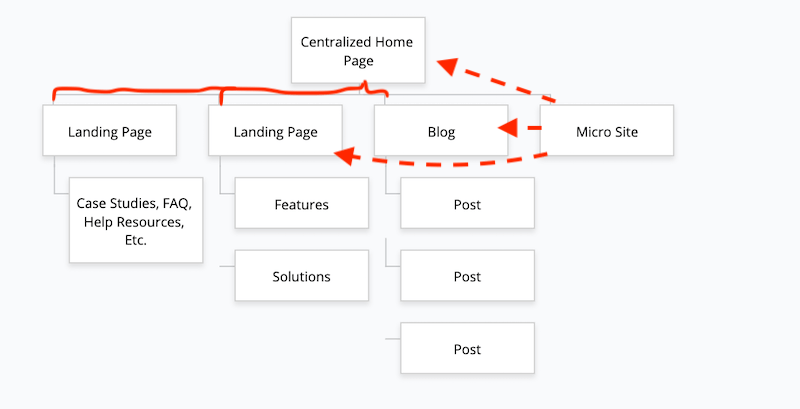

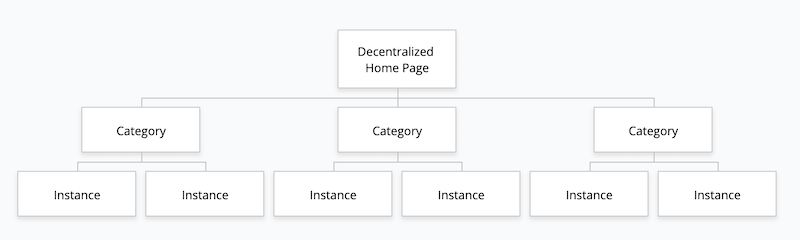

People always say “know searcher intent”, but too often, the value proposition of this practice is vague. The reason you want to know searcher intent for your queries is that it will feed into your entire marketing funnel. The calls to action can be customized and the internal linking structure can be optimized along with PageRank and CheiRank (see Kevin Indig’s post on optimizing website architecture based on your website’s goals).

If you know the intent of the searcher, you can not only rank better, but you’ll convert better, and you’ll lead searchers down the correct path within your site.

Here are two examples of how you can distribute PageRank and CheiRank.

The 2nd example:

The 2nd example distributes PageRank more equally (thus it doesn’t rely on a centralized model). To understand more, just read Kevin’s wonderful post.
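If you want to see the two metrics side by side, here is a minimal sketch that computes them for a tiny internal link graph. PageRank rewards pages that receive links, while CheiRank is the same calculation run on the graph with every link reversed, so it rewards pages that hand links out. The four-page `site` graph, the damping value of 0.85, and the iteration count are my own assumptions for illustration; this is not taken from Kevin’s post.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Power-iteration PageRank for a dict of page -> list of pages it links to."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's rank evenly across its outgoing links.
                share = damping * rank[page] / len(targets)
                for t in targets:
                    new_rank[t] += share
            else:
                # Dangling page: spread its rank evenly over all pages.
                for p in pages:
                    new_rank[p] += damping * rank[page] / n
        rank = new_rank
    return rank


def cheirank(links, **kwargs):
    """CheiRank: PageRank computed on the graph with every link reversed."""
    reversed_links = {}
    for page, targets in links.items():
        reversed_links.setdefault(page, [])
        for t in targets:
            reversed_links.setdefault(t, []).append(page)
    return pagerank(reversed_links, **kwargs)


# Hypothetical site: the home page links out the most, the contact page is linked to the most.
site = {
    "home": ["services", "blog", "contact"],
    "services": ["contact"],
    "blog": ["services", "contact"],
    "contact": ["home"],
}
pr = pagerank(site)
cr = cheirank(site)
for page in site:
    print(f"{page:10s} PageRank={pr[page]:.3f} CheiRank={cr[page]:.3f}")
```

In this toy graph the contact page collects the most PageRank and the home page has the highest CheiRank, which is the kind of imbalance you would look for before changing your internal links.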

Section 2: Google Ranking Factors and Google’s Algorithm

To really master SEO, you need to understand what the search engines look for, why, and how you can leverage that knowledge into something beneficial for your business. Once you know how the system works and what its rules are, you’ll not only be able to play the game…but win at it, too. 😈

My personal philosophy on SEO is that I should learn the practical maximum for tweaking signals to send the strongest signal without going too far and getting penalized. This is the real struggle for SEOs. Once you identify a ranking factor, you then need to identify just how far you can tweak that factor before it ruins user experience or before Google starts to penalize you.

Other SEOs may warn against this methodology, but ultimately, an SEO is paid to optimize for an algorithm, and that’s all I’m suggesting.

I know of one person that stands out amongst everyone else in the SEO industry as THE expert on dissecting Google’s algorithm. I’m sure many of you already know his name, but go follow Bill Slawski’s Blog if you’re looking for someone to search through hundreds of thousands of pages to distill relevant Google patent information on search engine ranking methodologies.

Outside of Slawski, I really appreciate Ahrefs’ curated list of SEO blogs.

As a general rule of thumb, beginner level SEO can be learned from a variety of sources as it’s not too hard to nail down the basics. We’ve mentioned our SEO basics, but you can also check out people like Neil Patel and Brian Dean if you’re looking for a well-designed beginner level SEO walkthrough (though, these guys seem more like content marketing talking heads rather than sources of deeper SEO insights).

I also suggest identifying experts via social media, then following them on Twitter and reading their blog (if they have one). To identify an expert, I’d stalk the walls of famous SEOs (like Rand Fishkin, for example).

You can also start from my tiny list. You can also find SEO Agencies like mine, and reverse engineer what they do right.

Social media is the free option for getting good SEO technique insights, but you can also pay for someone to spill the beans on their SEO techniques or just buy an SEO course from reputable sources. Matt Diggity has a great course that I highly recommend. Other amazing courses exist as well, but I’m not here to promote person after person for their paid courses, so I’ll push onward.

Search engine algorithms are based on math. If you understand the ranking factors and apply advanced SEO techniques, you can use them to your own advantage. Google is smart, but ultimately, Google is just using math. Great content is…great, but Google can’t tell the difference between great and poor, so it uses math to get as close as possible.

The Flaws of the Algorithm

As smart as search engine algorithms are nowadays, it’s important to know that they still have limitations that you need to work around.

They don’t “see” web pages the same way we do, and it’s this difference between human and machine that is the reason that SEO even exists in the first place—we do search engine optimization because it helps make our content readable to search engine bots.

One of the biggest weaknesses of current search engine algorithms is that they can’t understand non-text elements. Google can’t actually read pictures, illustrations, or videos unless they have alt text, meta information, or surrounding contextual information.

Another weakness is that the algorithm can be manipulated. Ideally, the highest quality content and the most relevant results would rank the highest, but that isn’t always the case.

Take the recent Google 30-day ‘Rank or Go Home’ SEO challenge held in the Facebook group, SEO Signals. Entrants competed to get the best ranking for the term “rhinoplasty Plano”. The first result was a minimalist site with relatively poor-quality content. The second result was a website entirely in Latin, except for a few strategically-placed keywords.

The Facebook group ‘SEO Signals’ has members ranging from SEO beginners to advanced SEO experts.

Of course, Google and other search engines are getting much better every day. There’s also the issue of impressing not just the algorithm, but the real people who make up your target market. The techniques we’ll be discussing in this guide are useful, long-term strategies for both search engines and human beings.

Real SEO Ranking Factors

No one, not even the best SEO experts, can tell you with 100% certainty what the formula is for the perfect SEO strategy. First of all, Google alone is said to have OVER 200 different ranking factors.

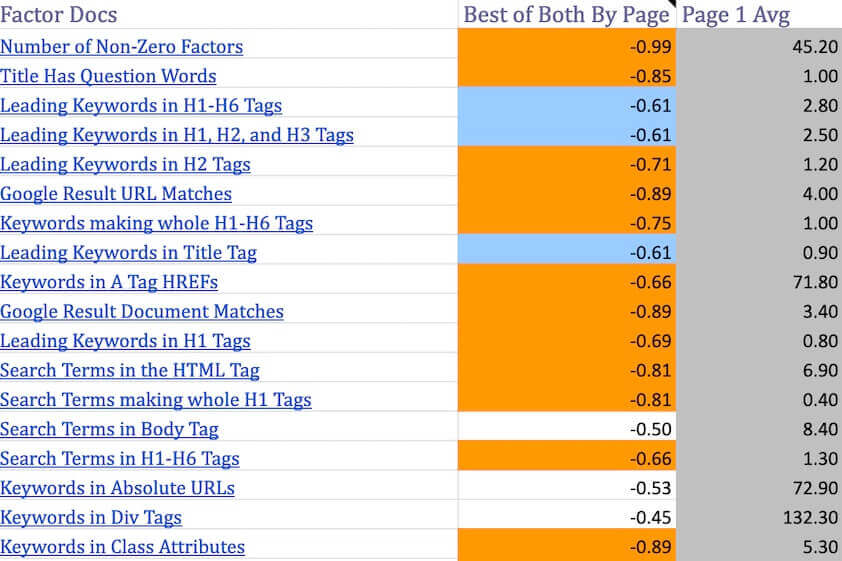

Benchmarking tools such as Cora or Surfer SEO correlate ranking positions against a few hundred different SEO ranking factors to estimate which factors matter most for any given keyword. Utilize these tools as part of your advanced SEO strategy to skyrocket your rankings.

Even if someone could list all of the factors, there’s no way to tell exactly how much any particular factor contributes to your SERP ranking. Plus, the list of factors (and how important they are) changes all the time!

The above image shows the 500+ ranking factors that Surfer SEO measures. The tool then allows you to visualize what everyone in the SERP is doing.

Despite all the rank factor changes, there are some factors that are proven to be important aspects of any SEO campaign. The exact impact may be speculation, but that doesn’t change the fact that these factors actually matter and are worth the effort. Here’s a great episode from some SEOs I trust and they cover this topic in massive detail.

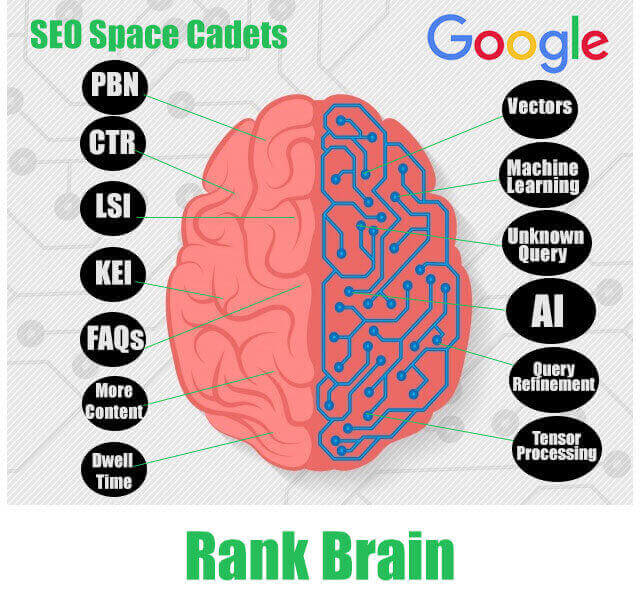

RankBrain

RankBrain is a relatively recent addition to the Google algorithm, but it’s already considered the third-most important ranking factor.

RankBrain is a sophisticated machine learning algorithm that learns from REAL user searches and behaviors. It influences rankings based on how much a user interacts with your site. Let’s illustrate with an example.

Say that you are searching for dog grooming tips. You notice that the fifth result looks interesting, so you click on it and spend several minutes reading through the article. Google will take note of the fact that result #5 is valuable, and will potentially boost its rankings.

This is a super simplistic view, but humor me as I’ll dig into the more advanced view a little further down.

The opposite is also true. If a lot of people ignore the first result, or click it but leave right away because the information isn’t relevant or interesting, Google will most likely demote that result to a lower ranking. This kind of quick exit is what ‘bounce rate’ measures, and it is a commonly debated SEO ranking factor. 📉

What you should learn from RankBrain is that providing high-quality content that users actually want to read will encourage them to spend more time on your site. This, in turn, will help improve your SERP rankings.

I’ll share with you my favorite graphic on RankBrain. Hopefully, it helps.

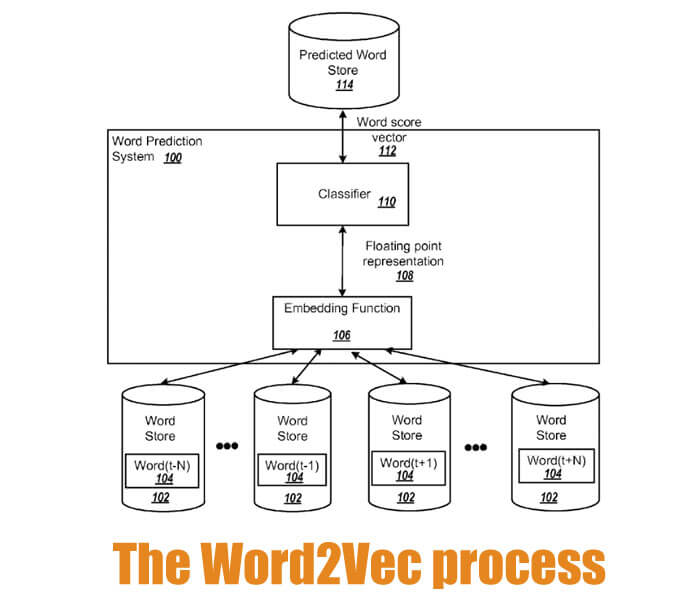

The shortest way to summarize the meat of RankBrain – RankBrain works by embedding words into vectors. Google doesn’t always understand words, but it can understand vectors (Bill Slawski’s blog has additional content on patent information on word vectors). RankBrain also needs to recognize vector similarities and differences.

If you’re a big ole nerd, you should be thinking that RankBrain sounds similar to something else from SEO’s past – Word2vec.
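To show what “recognizing vector similarities” means in practice, here is a tiny sketch using cosine similarity, the standard way to compare word vectors. The three-dimensional vectors are made-up numbers for illustration only; real embeddings have hundreds of dimensions, and nothing here reflects Google’s actual vectors.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up 3-dimensional vectors, for illustration only.
toy_vectors = {
    "backlink": [0.9, 0.1, 0.0],
    "inbound link": [0.8, 0.2, 0.1],
    "dog grooming": [0.0, 0.1, 0.9],
}

for phrase in ("inbound link", "dog grooming"):
    score = cosine_similarity(toy_vectors["backlink"], toy_vectors[phrase])
    print(f"backlink vs {phrase}: {score:.2f}")
```

Phrases that point in a similar direction score close to 1, and unrelated ones score close to 0, which is how a machine can treat two differently worded queries as near-equivalents.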

If you haven’t noticed the constant bouncing of queries and rankings, then you’ve been asleep. One of the reasons (besides Rank Transition) is that Google is basically guessing through trial and error.

The problem with RankBrain is that its usage is so vague. SEOs don’t really know how to apply it to SEO techniques. We know that it is used for ambiguous searches and for never-before-seen searches.

If you have any real world examples of RankBrain changing the way SEO functions, drop a comment.

For now, suffice it to say, my suggestion is to continue optimizing for simple queries (“What is a Backlink?” for example), rather than trying to guess Google’s understanding of complex vector relationships.

Section 3: Indexation and Crawl Guide

These words get thrown out a lot in advanced SEO guides, but do you really know what they mean?

When search engines “crawl” your pages, they “read” the content to gain information about it. It helps search engines understand what exactly your website is all about.

Through crawling, a search engine can pick up the most important keywords. If it comes across a link, the bots will follow it to crawl that page as well.

Search engine bots ‘crawl’ your website and use a few hundred SEO ranking factors to rank your site against thousands of other sites.

Indexing, on the other hand, makes your website, pages, and content available to the viewing public. Unless your content is indexed, people will not be able to find it via the search engine results page, even if they type in all the right keywords.

People can still access your website by typing in the URL directly or clicking on a link on another page, but not showing up in the SERPs will significantly decrease your site’s traffic potential.

Many guides focus on “How to Rank with SEO Marketing”, but crawling and indexing are just as crucial, and ultimately, they will affect how you perform on the SERP. Stay tuned, we’ll teach you how to make it easier for search engines to crawl and index your content, which will, in turn, boost its rankings. 📈

Google has its own description of how it crawls websites, so feel free to read the webmaster description. I suggest reading the “long answer”:

“Googlebot processes each of the pages it crawls in order to compile a massive index of all the words it sees and their location on each page. In addition, we process information included in key content tags and attributes, such as `<title>` tags and alt attributes. Googlebot can process many, but not all, content types. For example, we cannot process the content of some rich media files.

Somewhere between crawling and indexing, Google determines if a page is a duplicate or canonical of another page. If the page is considered a duplicate, it will be crawled much less frequently.

Note that Google doesn’t index pages with a noindex directive (header or tag). However, it must be able to see the directive; if the page is blocked by a robots.txt file, a login page, or other device, it is possible that the page might be indexed even if Google didn’t visit it!”

Improve your indexing

There are many techniques to improve Google’s ability to understand the content of your page:

- Keep pages that you want to hide out of Google’s index using noindex. Do not “noindex” a page that is blocked by robots.txt; if you do so, the noindex won’t be seen and the page might still be indexed.

- Use structured data.
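The noindex and robots.txt conflict above is easy to check for in bulk. Here is a rough sketch using Python’s built-in `urllib.robotparser`; the robots.txt rules, the example.com URLs, and the `pages` mapping are hypothetical stand-ins for whatever your own crawl export looks like.

```python
from urllib.robotparser import RobotFileParser


def find_hidden_noindex(robots_txt, pages, user_agent="Googlebot"):
    """Return URLs that carry noindex but are blocked from crawling.

    `pages` maps each URL to True if the page has a noindex directive.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    conflicts = []
    for url, has_noindex in pages.items():
        # A blocked page can never show its noindex directive to the crawler.
        if has_noindex and not parser.can_fetch(user_agent, url):
            conflicts.append(url)
    return conflicts


# Hypothetical robots.txt and page inventory, for illustration only.
robots_txt = """
User-agent: *
Disallow: /private/
"""
pages = {
    "https://example.com/private/report.html": True,
    "https://example.com/thank-you.html": True,
    "https://example.com/blog/post.html": False,
}
print(find_hidden_noindex(robots_txt, pages))
```

Any URL this returns needs one of two fixes: unblock it in robots.txt so the noindex can be seen, or accept that it may still show up in the index.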

For those getting more advanced, you’ll need to understand the science of “crawl budgets”. Gary Illyes writes a wonderful description in this crawl budget blog post.

Essentially, he covers the factors that affect your crawl budget, things like:

- Faceted navigation and session identifiers

- On-site duplicate content

- Soft error pages

- Hacked pages

- Infinite spaces and proxies

- Low quality and spam content

How to Get URLs Indexed

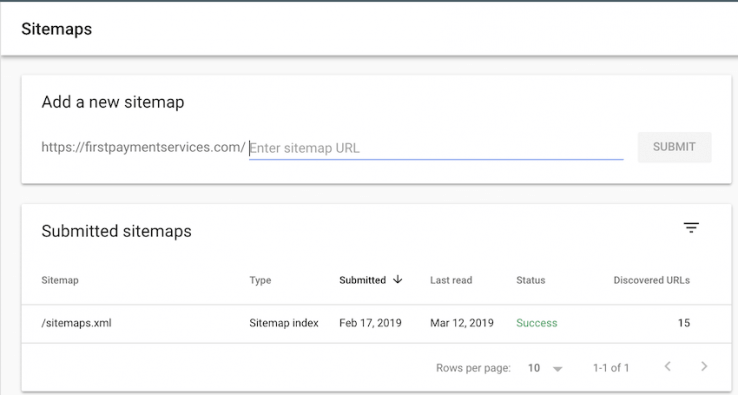

As we’ve mentioned, search engine bots crawl and index your content. However, this can take a while for some content, especially on low traffic sites. You can speed up the process by submitting your sitemap to the search engine for indexing.

A sitemap is a document that outlines or explains your site structure to both users and the search engine, to make it easier to navigate through it. Adding your sitemap to Google, the world’s biggest search engine, is easy enough.

- Upload your sitemap to a location on your website.

- Go to your Google Search Console dashboard.

- On the left sidebar, click on Sitemaps.

- Under “Add a new sitemap”, enter the address/URL of your sitemap.

You can also check on the Google Search Console which pages have been indexed, which pages were excluded from indexing, and if there are pages with indexing errors that need to be addressed.

There are 3 main formats for sitemaps, although some search engines may accept other formats as well (Google Webmaster accepts 9 different formats). The most common formats are:

- XML: XML is the recommended format for most sitemaps. It is widely accepted across different search engines, and it is easy to generate using an XML sitemap tool. It is one of the easiest formats for search engines to crawl.

- RSS: RSS feeds may be created automatically through a blog site. It’s a subtype of the XML sitemap.

- Txt: The simplest and easiest sitemap format to create is the Txt sitemap. However, you sacrifice functionality for convenience. You can’t add metadata to a Txt sitemap.
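If you’d rather not rely on a generator tool, an XML sitemap is simple enough to build yourself. Below is a minimal sketch using Python’s standard library. The `urlset`, `url`, `loc`, and `lastmod` elements and the namespace come from the sitemaps.org protocol; the example.com URLs and dates are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, last_modified) pairs; last_modified may be None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            # lastmod is the kind of metadata a Txt sitemap cannot carry.
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder URLs and dates, for illustration only.
print(build_sitemap([
    ("https://example.com/", "2021-01-15"),
    ("https://example.com/blog/", None),
]))
```

Save the output as sitemap.xml on your site, then submit its URL in Google Search Console as described above.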

Publishing Posts: Indexation and Traffic Perks

How often you publish posts is also quite important. More content = more value = more potential clicks. Not only does it often equate to more clicks, but it also plays a part in getting crawled more often, provided that the content isn’t poorly written or formatted (orphaned content with no internal linking would be an example of poor formatting). I recommend reading Shout Me Loud’s tips for increasing crawl for additional info on specifically increasing the crawl rate of your site.

A HubSpot study showed that sites that published at least four times a week had 450% more leads than those that published four or fewer posts a month. But publishing every day may not be realistic, especially if you’re a small to medium business owner with limited time and budget for SEO.

While you should aim to publish as much as possible, stick to a publishing schedule you know you can commit to, even if it’s just once a week. Try to publish at the same time and day of the week so that your readers know when to check back for new content.

Once you hit publish, though, that isn’t always the end of it. You can (and should) update old posts so that they stay relevant and useful to your customers.

For example, in a few years, this guide to advanced SEO will likely be outdated. To keep it fresh and accurate, we’ll need to update it to fit new SEO standards. Instead of writing the article from scratch, we can save time and effort by updating the numbers, removing inaccurate information, and adding new info.

Every once in a while, look at your lineup of posts and check to see if any could use a rewrite. We recommend that you revamp articles that:

- Have low traffic or CTR, even with a lot of high-volume keywords

- Are evergreen but contain outdated information

- Are comprehensive educational posts that aren’t getting proper backlinks

Section 4: Speed and Security

In 2018, page load time officially became a ranking factor for mobile searches. It’s about time, too; studies have shown that more than half of mobile users abandon a site if the loading time exceeds 3 seconds.

As internet visitors become used to a constant influx of information, their attention span and patience decrease. Optimize your site for mobile and speed to keep both search engines and your visitors happy.

Because Google’s goal is to give users the best experience possible, it makes sense that faster sites will be rewarded with higher rankings. Just like content, though, speed isn’t the end-all, be-all. You also have to consider if your site is at its most optimized, a.k.a. if it’s the fastest it can be, or if there are things slowing it down.

Have you ever quit loading a website halfway through because it took too long? In a world where information is so readily available, sometimes a few seconds can spell the difference between you and your competitor snagging that sale.

Studies have shown that bounce rate significantly increases after a mere 3-second wait. Improving your website speed will keep more visitors on your site for longer, improving not just your SERP ranking but also your conversion rate.

You can use a free tool like GTMetrix or Google PageSpeed Insights to find out what your page loading time is, and what your opportunities are to lower that number.

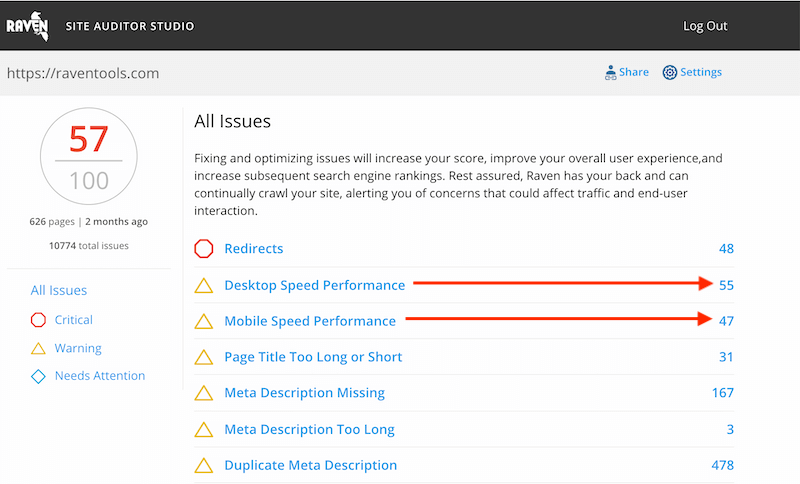

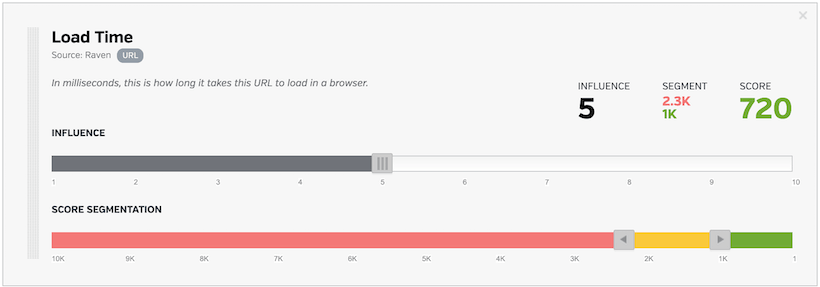

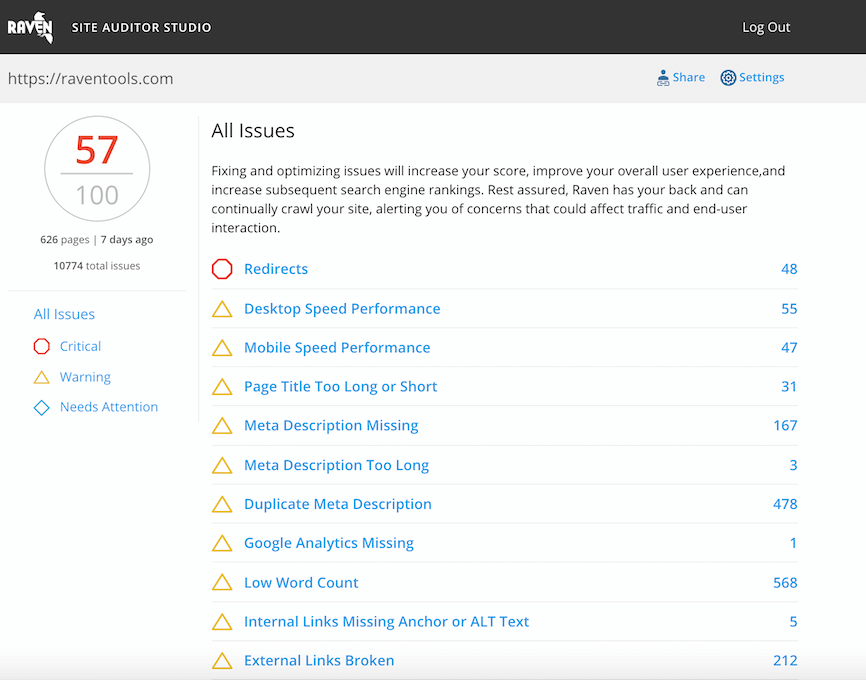

Since speed is so important to auditing your website, Raven includes detailed speed insights in our audit tool. You can also use the Website Auditor to identify page issues.

Visit https://auditor.raventools.com/#/s/11ul5vav7 to see what a detailed report looks like in the Raven Website Audit Tool.

Some of the most common solutions to a slow website are:

- Compressing visual content like images and video. Large files take longer to load, slowing down your site. Compression reduces the file size, usually without a noticeable loss of image quality.

- Using a faster web host. Your web host can set limits on your bandwidth. Private servers from premium hosts are more expensive than regular hosts, but they are often much faster.

- Hosting your files on an external network instead of embedding files. This allows you to display images and video without bogging down your web speed.

- Using accelerated mobile pages that reduce mobile page loading time to a fraction of a second.

- Switching to a cleaner, less bloated template with minimal code.

On top of speed, there’s also the issue of security. While Google hasn’t explicitly said that website security is a major factor, it does provide a better experience for your site visitors.

When a website has an SSL certificate, customers feel more at ease. Users will think twice about clicking on an unsecured site because their private information may be at risk.

SSL Encryption

Secure Sockets Layer (SSL) encryption offers a higher level of security and safety for users. When a site has SSL encryption, the data that passes between the visitor and the site is encrypted, so malicious third parties cannot read or steal it in transit. It also protects information from being altered as it is being transferred.

You can spot an SSL-encrypted website by looking at the URL bar. If there is a padlock next to the URL, and the URL starts with HTTPS instead of HTTP, then it has SSL encryption.

While we can’t say that SSL encryption is a major ranking factor, we know for certain that SSL is a ranking factor. As of Oct. 2017, half the web is secured. I haven’t found solid statistics on updates to this number, but it’s safe to say that with Google’s strong-arm policy, everyone will move to SSL if they care about web traffic.

It’s quite easy to get SSL certification. You just have to get an SSL certificate from a certificate authority (there are both paid and free certificates). Once your site’s been verified, you can install your SSL certificate.

Section 5: Mobile SEO, Featured Snippets, Structured Data, and Voice Search

(hint: all of these things are intertwined)

The new age of search will be dominated by mobile. The advent of mobile first indexing is just the beginning. Featured snippets and voice searches are tailor-made for mobile. The UI of the SERP in mobile makes it seem like featured snippets ARE the SERP.

Voice Searches literally only read out the information from those little boxes, so all of a sudden, you should really (like REALLY) be focusing on mobile optimizations.

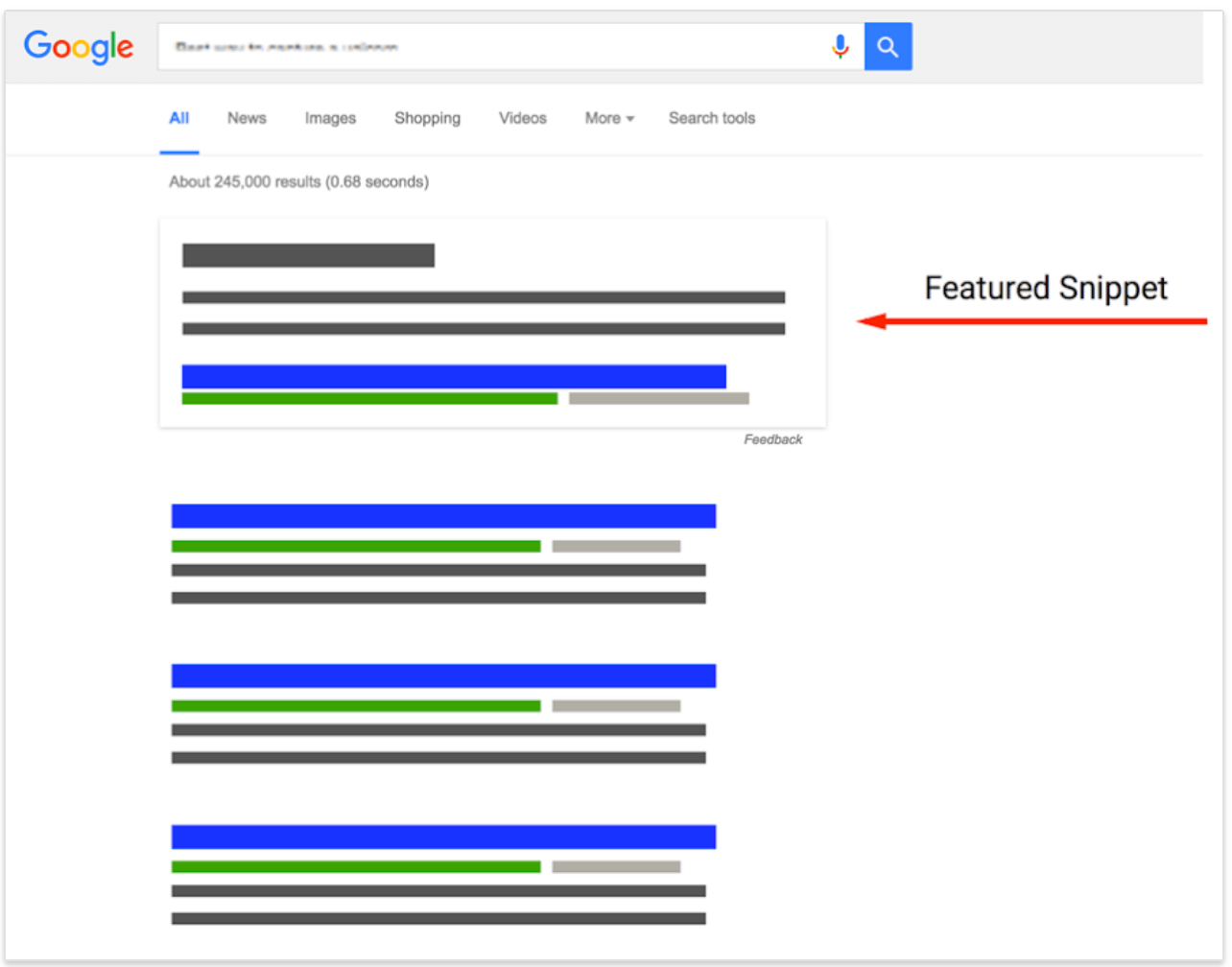

Rank Zero

You already know that making it to the first page is important, and getting that top spot is even better. But in recent updates to the Google algorithm, there’s another rank to aim for—the featured snippet.

Utilize advanced SEO techniques to gain ‘Rank Zero’ for target SEO keywords.

Featured snippets are small bits of information lifted directly from a search result that answer the user’s query. They can be in paragraph, list, or table form. I dig into this in detail in my Rich Snippet Visual SERP Guide.

These snippets take up the very top part of the search engine results page, appearing before the #1 result. This is why it’s called rank “zero”. On a mobile device, the featured snippet has MASSIVE importance as it occupies a large part of the screen.

So far, only about 12.9% of searches even have a featured snippet, but it definitely “steals” a lot of clicks and views from the #1 spot.

Getting to the #1 organic search result is tough enough, but earning the coveted “rank zero” is even harder. Featured snippets are hard to achieve but give great exposure to those who manage it.

To increase your chances of landing a featured snippet, there are a few ways you can optimize your content.

First off, featured snippets always come from the top results. You don’t have to rank number one or even number three, but you do need to be on the first page to get a shot at it.

Second, create short and digestible “snippets” of information for relevant ranking keywords. Most rank zero content answers a question in a short and succinct way, like “what is SEO”.

Rank zero snippets are usually in the 40-60 word range. Format your content so that it can be divided into these chunks. Most featured snippets are in paragraph form, but bulleted lists and tables can also be featured.
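A quick way to audit existing content against that 40-60 word range is to count words per paragraph. Here is a small sketch; it assumes your draft is plain text with a blank line between paragraphs, and the function name is just my own label.

```python
def snippet_candidates(text, min_words=40, max_words=60):
    """Return paragraphs whose word count falls inside the target range."""
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    return [p for p in paragraphs if min_words <= len(p.split()) <= max_words]


# Filler text standing in for a real draft.
draft = " ".join(["word"] * 50) + "\n\n" + "This paragraph is too short."
for paragraph in snippet_candidates(draft):
    print(len(paragraph.split()), "words:", paragraph[:40], "...")
```

Paragraphs that fall outside the range aren’t wrong, but the ones that answer a question directly are worth trimming or expanding until they fit.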

Voice Search Optimization

Alexa. Cortana. Siri. What do they have in common?

More SEO opportunities.

This section summarizes our previous post on optimizing for Voice Search, so if you want more detail, I suggest you check it out when you’re done here.

Voice command technology has made huge leaps in recent years, resulting in 47 million Americans (and millions more people around the world) having some sort of smart speaker/device in their homes.

Through these devices, they can automate parts of their daily routine, do “screenless” online shopping, and search for information without ever having to look at their phones or computers.

Currently, voice searches make up 20% of all Android Google searches. That number is only going to grow, so there’s no better time than now to optimize your content for voice searching.

Here are some of the most common characteristics of a voice search result:

- Voice searches are mostly taken from the top 3 results of the page.

- Voice searches are often in the form of a question, such as “what’s the difference between camembert and brie” or “what time does Taco Bell close?”

- Search results that contain both the question and the answer in the content are preferred for voice search.

To write voice search-optimized content, include the actual question somewhere on the page, followed by a short answer. Adding an FAQ section to your posts will make this easier for you, the reader, and the smart software.
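If you add an FAQ section, you can also describe it to search engines with schema.org’s FAQPage type. The sketch below generates that JSON-LD from question and answer pairs. The `FAQPage`, `Question`, and `Answer` types are real schema.org vocabulary; the sample answer is a placeholder, and whether this markup influences voice results for your site is an assumption you’d need to test.

```python
import json


def faq_jsonld(faqs):
    """Build FAQPage JSON-LD from a list of (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder answer text, for illustration only.
print(faq_jsonld([
    ("What time does Taco Bell close?", "Closing times vary by location."),
]))
```

The output goes inside a `<script type="application/ld+json">` tag on the same page as the visible FAQ.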

Mobile SEO

We can’t stress enough how important it is to have a mobile-friendly website in 2021.

The big push for mobile-first indexing started in March 2018. Google announced it would be prioritizing sites that have a mobile version or a responsive site that worked well on mobile devices.

If you think about the fact that more than half of all Google searches are done on mobile, that shift makes much more sense. Google will, of course, rank mobile-friendly sites higher since they can provide more value to users on the go.

When optimizing your site for mobile, you first have to analyze your current site. Google has a free tool that tests how mobile-ready your content is and what you’ll need to improve.

Some sites may have a mobile version of the site, but SEO experts agree that a responsive site is better. Responsive sites use themes that adapt to the device they’re being viewed on. So a responsive site will look just as good on mobile as it does on a PC, with no extra coding necessary.

Mobile optimization also involves creating mobile-friendly content that is viewable and easy to read on a small screen. This means fast loading times, large text, and proper formatting.

Lastly, we need to cover structured data so you’ll know how to properly format your data to get snippets and to appear in voice searches with a greater frequency.

Schema Markup

Schema markup refers to code that helps search engines understand not just the text itself but the meaning of the information, and what kind of information it is. Instead of Google seeing “3PM, 13 March 2020, Carnegie Hall” as text, it will see it as a time, date, and location respectively.

Marking up your information helps Google give users information that more accurately aligns with their search intent. There are hundreds of markup categories, like price, TV show, name, and so much more.
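Here is what the Carnegie Hall example could look like as actual markup, sketched as a small generator for schema.org Event data in JSON-LD. `Event`, `Place`, `startDate`, and `location` are real schema.org terms; the event name "Example Concert" is a placeholder I made up, since the original example doesn’t name one.

```python
import json
from datetime import datetime


def event_jsonld(name, start, venue):
    """Build schema.org Event JSON-LD with a machine-readable date and place."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "Event",
            "name": name,
            # ISO 8601 lets a search engine read this as a date and time, not plain text.
            "startDate": start.isoformat(),
            "location": {"@type": "Place", "name": venue},
        },
        indent=2,
    )


# "Example Concert" is a hypothetical event name.
markup = event_jsonld("Example Concert", datetime(2020, 3, 13, 15, 0), "Carnegie Hall")
print(markup)
```

The point is the split: the time and date become one typed field and the venue becomes another, instead of one undifferentiated string.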

It is extra work, especially if you have existing content that you need to mark up, but is it worth the effort? All signs point to yes.

Posts with schema markup average a whopping 4 positions higher in the results page compared to plain text/non-schema results.

In a battle for first page and rank #1, those 4 places could be a major gamechanger. Also, including schema markup increases your chances of landing a featured snippet in rank zero, further boosting your rank.

Raven has a 10 best Schema Markups post that may benefit some of you.

Section 6: Content Strategy and Keyword Strategy

It’s been said many times and many ways, but content truly is king. Generally, the better and more useful your content is, the more Google is likely to rank you higher. However, it’s not just about good content, it’s also about optimized content—the right keywords in the right way.

Keep in mind, content isn’t just important for SEO, it’s paramount if you hope to convert. People like Kyle Roof prove that Google can’t read content, but it can understand keywords.

So when I say “content is king”, I’m saying that content is king for conversions and it’s important if you hope to build a web that looks natural, while containing x amount of keyword 1, and x amount of ___ term.

Word Count

One of the more contested aspects of web content creation is the length. Is it better to have short, concise articles or long ones? There are staunch advocates on either side, but the answer is not as straightforward as you might think.

Looking at content that does rank, most top search results tend to be on the longer side. This, of course, varies per industry, topic, format, etc., but the general average word count of over a million #1 results is 1890 words.

Now, that doesn’t mean that 1890 should be your target for every post. There isn’t a magic number that will automatically boost your rankings.

Longer isn’t always better. A 500-word blog isn’t inherently less valuable than a 2000-word article. It really depends on the quality. There are well-written short blogs that are leagues better (and rank higher) than their much-longer counterparts.

The reason longer content tends to rank higher is because longer content tends to be more in-depth. The writer has more room to insert relevant keywords, thoroughly discuss important concepts, and provide more value to the reader.

No matter how long your content is, make sure to demonstrate your expertise, authority, and trustworthiness. Quality first over quantity.

Content Formatting

Use headers (H1, H2, H3, H4, etc.) for your on-page content. Not only will this help bots understand your content better, but it breaks up walls of texts into smaller blocks for your readers. Stick to short sentences and paragraphs that users can quickly scan through.
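To check a page’s header structure without eyeballing the source, you can pull out the heading outline with Python’s built-in HTML parser. This is a rough sketch: the two rules it checks (exactly one H1, no skipped levels) are common conventions I’m assuming here, not confirmed ranking requirements.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect (level, text) pairs for every h1-h6 tag in a page."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._text = []

    def handle_data(self, data):
        if self._level is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._text).strip()))
            self._level = None


def heading_issues(html):
    """Flag a missing or duplicate h1 and any jump that skips a heading level."""
    parser = HeadingOutline()
    parser.feed(html)
    issues = []
    h1_count = sum(1 for level, _ in parser.headings if level == 1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    previous = 0
    for level, text in parser.headings:
        if previous and level > previous + 1:
            issues.append(f"'{text}' jumps from h{previous} to h{level}")
        previous = level
    return issues


print(heading_issues("<h1>Guide</h1><h2>Intro</h2><h4>Deep dive</h4>"))
```

Run it on your own page and on the top-ranking competitors for the same keyword to compare outlines.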

Formatting can help draw attention to and emphasize certain phrases. Use it sparingly on your article’s most important points.

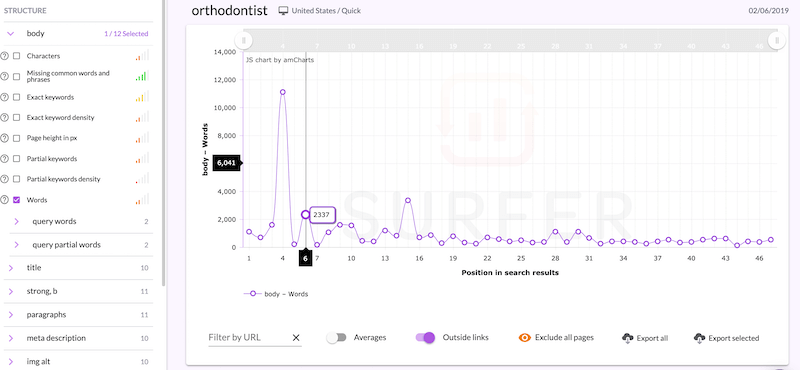

Like I’ve mentioned before, the tool I personally use for checking how to format, is Surfer SEO. I like to review what other people are doing with good on page practices for my particular target keyword, and then I try to apply what they’ve done to my own content.

SEO is a lot of reverse engineering the SERP in 2019.

Keyword Strategies

Keywords, keywords, keywords. Many ‘advanced’ SEO guides will repeat this ad infinitum. But the real question is, what keywords do you use?

The first level of keyword research is listing down the most important search phrases that you want to rank for. This includes your business name, the name of your products/services, your location, etc. It also includes words related to your niche/topic.

Once you have an initial list of keywords, you can use an online tool to find other keywords that you may have missed. These tools make suggestions for related keywords while also giving you insight into the monthly search volume of those keywords. Gotch SEO has a great 19-minute video covering the topic, if you’re looking for a video review. This is going to help you create a forecast or prediction of your potential SEO outcomes.

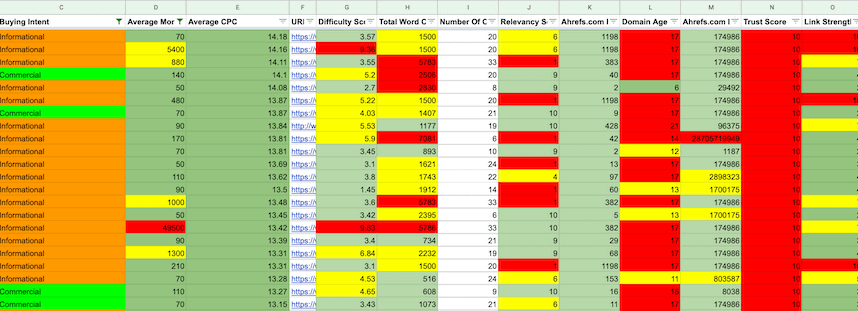

My personal Google Sheet ends up looking like this:

Here are other tips for SEO keywords:

- Avoid broad, generic ‘short-tail’ keywords like “wine” or “SEO”. It can be super difficult to rank for those words due to their competition, plus they don’t target a specific search intent.

- Use long-tail keywords that are specific to your particular business. Instead of “burgers”, try “best Angus beef burgers Brooklyn”, for example.

- Use an LSI (latent semantic indexing) keyword tool to find keywords that are similar to or related to your main keyword phrase. Thanks to Google’s increasingly-sophisticated algorithm, you can rank for keywords even without using an exact match phrase.

- Search for competitor keywords. This will help you identify new queries you could be targeting, improve your SEO strategy, and gain an advantage over your competition.

Advanced SEO Content Guide

Really good content isn’t easy to accomplish. It requires time, effort, and some expertise. Focus on creating high-quality content that your customers will actually like, and the rankings will follow.

Writing Techniques

Hook your readers. The longer they stay on your blog, the better. Google does notice how much time visitors spend on your site, so how do you encourage people to keep reading?

Write high-quality, interesting articles that provide solutions to your customers’ biggest challenges. Write about things that actually interest your target market. If you’re a car-related business, your customer base wouldn’t be turning to you for advice on cooking a steak. Cater to their needs and address their pain points.

Get creative with your work. Captivate your audience by using transition words, phrases, or sentences that pull them into the next line.

Use the attention-grabbing “bucket brigade” technique to generate interest in the next sentence:

- Here’s something you might not know:

- The truth? It’s _____________

- Listen:

ALWAYS use proper grammar, spelling, and punctuation.

Even if you have a fun or quirky business, your content needs to be readable and professional. But don’t be scared to inject some personality into it! Be witty, be funny, or be playful—if it fits within your brand image.

Make sure the writing is smooth and flows well. Use short sentences and paragraphs to make it much easier to read. If you have a particularly long or complex post, try to divide it into shorter easy-to-understand chapters.

Content Ideas

There is no limit to the kind of content you can make. Listicles, roundups, guides, checklists, videos, and infographics all make for great posts.

No matter what industry you’re in, you could make evergreen content. Evergreen content is content that is relevant to your customers all the time and isn’t dependent on trends or current events. Timeless resources could include comprehensive guides, FAQs, definitions of concepts, and others.

Incorporating Keywords

How you use keywords can really make a difference.

The most important thing is to use them organically throughout the content. Keywords should never break the flow of your content. It should feel natural.

All too often I hear other writers expressing frustration about integrating SEO keywords into their content naturally.

Here are 4 sure-fire techniques to implement difficult keywords:

1. Using Commas

Let’s say your keyword is ‘Australia Ultimate Frisbee’ — integrating the keyword naturally into your text without feeling ‘out of place’ can be tough… by using a comma IN the keyword, you can integrate your keyword creatively.

Keyword: Australia Ultimate Frisbee

- As the summer kicks on in Australia, Ultimate Frisbee is becoming a popular pastime for beach goers this year.

2. Using Stop Words

Search engines have become significantly more advanced in recent years and are capable of filtering out words such as ‘for’, ‘of’, ‘in’ or ‘but’. These stop words help structure your content grammatically without having an effect on the actual intent behind the keyword.

Keyword: Dentist Perth

Simply integrating your keyword into your content as ‘Dentist Perth’ would come across as poor English. Using the stop word ‘in’ (as in ‘dentist in Perth’) helps make the keyword grammatically correct while still keeping the original intent behind the search.

However… there are exceptions to this. If the stop word changes the INTENT behind the search, Google will take this into consideration when crawling your content.

The search phrase [Notebook] would return results for laptops.

Whereas the search phrase [The Notebook] would return results with the dashing Ryan Gosling.
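To make the stop word idea concrete, here is a rough sketch of how you could check whether a sentence still "contains" a keyword once stop words are ignored. This is only an illustration of the concept, not how Google actually works; the stop word list is a tiny made-up sample and the function names are mine.

```python
import re

# A tiny illustrative stop-word list; real lists are much longer.
STOP_WORDS = {"a", "an", "the", "for", "of", "in", "but", "to", "and"}


def content_words(text):
    """Lowercase the text and return its words with stop words removed."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return [w for w in words if w not in STOP_WORDS]


def mentions_keyword(keyword, sentence):
    """True if the keyword's content words appear in order, back to back,
    once stop words are stripped from the sentence."""
    target = content_words(keyword)
    words = content_words(sentence)
    span = len(target)
    return any(words[i:i + span] == target for i in range(len(words) - span + 1))
```

Notice that `content_words("The Notebook")` collapses to just `notebook`, which is exactly the exception described above: blindly stripping stop words loses the intent, so treat a check like this as a drafting aid only.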

3. Using FAQs ⁉️

Question keywords such as ‘How to do x’ can be tough to implement throughout your content naturally… without coming off as jarring or repetitive. By utilising FAQs, you can include your keyword and keyword variations easily through the headings and body text.

4. Using Conversational Writing To Your Advantage

Writing conversationally can be an effective method for implementing long tail keywords naturally… ESPECIALLY question long-tails.

Keyword: How Hummus Is Made

- So if you’ve been wondering how hummus is made then this is the article for you.

Integrating keywords into your content is a constant balancing act to optimize your content for both your readers AND the search engines. Both Google and your reader can tell if you’re just trying to stuff in your keywords as many times as possible. Keyword stuffing used to be a popular practice, but Google’s gotten much better at picking up on it.
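If you want a sanity check on that balancing act, a simple keyword density count can help. The sketch below counts how often a phrase appears and what share of the total words it takes up. There is no official "safe" percentage that I know of, so I deliberately don't hard-code a threshold; use the number to compare drafts, not as a pass/fail test.

```python
import re


def keyword_density(text, keyword):
    """Return (occurrences, share of all words taken up by the keyword)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", keyword.lower())
    if not words or not target:
        return 0, 0.0
    span = len(target)
    hits = sum(
        1 for i in range(len(words) - span + 1) if words[i:i + span] == target
    )
    return hits, hits * span / len(words)
```

A paragraph where the phrase makes up most of the words is an obvious red flag; a long article where it appears a handful of times in natural sentences is what you are aiming for.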

Headline Writing

Engaging headlines encourage readers to click on and read your article, so it’s important to make it creative and stand out.

(Tell me right now you don’t want to read this article)

Use your main keyword in the headline, but use it sparingly. Don’t overload the headline with keywords. Keep your headline to 65 characters or less for a short yet catchy title.

Try out different styles or voices for the headline. Come up with 2-3 different options with slightly different approaches.

Learn from these headline writing hacks:

- Use numbers in your headline. “7 Things To Consider When Buying A House” is interesting and sets reader expectations. Studies have also shown that articles with odd numbers get more clicks than those with even numbers.

- Take a cue from clickbait headlines like “You’ll Never Guess This Secret To Perfect Skin!”. Mystery, intrigue, and surprise are all elements of a great headline.

- Let people know that it is timely and updated content. “Fashion Trends For Fall 2021” is a better headline than the more generic “Fall Fashion Trends”.
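The checks above are easy to script when you're comparing a few headline options. This is a minimal sketch that applies the 65-character limit, looks for the main keyword (once, not several times), and notes whether the headline contains a number. The function name and the warning wording are my own, and the number check is only a nudge since not every headline needs one.

```python
import re

MAX_HEADLINE_CHARS = 65  # the limit suggested in the text above


def check_headline(headline, keyword):
    """Return a list of warnings for a candidate headline."""
    warnings = []
    if len(headline) > MAX_HEADLINE_CHARS:
        warnings.append(f"too long: {len(headline)} characters")
    uses = headline.lower().count(keyword.lower())
    if uses == 0:
        warnings.append("main keyword is missing")
    elif uses > 1:
        warnings.append(f"keyword repeated {uses} times")
    if not re.search(r"\d", headline):
        warnings.append("no number in headline")
    return warnings
```

Run your 2-3 candidate headlines through it and keep the ones that come back with the fewest warnings.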

Section 7: Backlinks and Link Building

Backlinks serve many purposes. First, promotion on another site could mean more traffic for you (and more paying customers). Second, it signals to the search engine that your content is valued by other members of the online community. Third, it helps the algorithm crawl new pages.

Let’s focus on the second purpose. When people link to your content, it acts as a vote of confidence. It lets people (and Google) know that they trust your content and want to share it with others.

Quantity matters, but so does quality. One backlink from a popular or established site is much more valuable than possibly even dozens of backlinks from low-quality sites. It only makes logical sense that a backlink from the New York Times would matter more to the algorithm than your neighbor’s small blog.

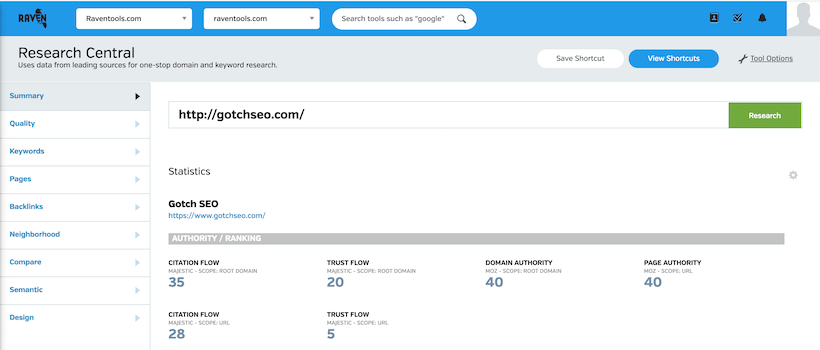

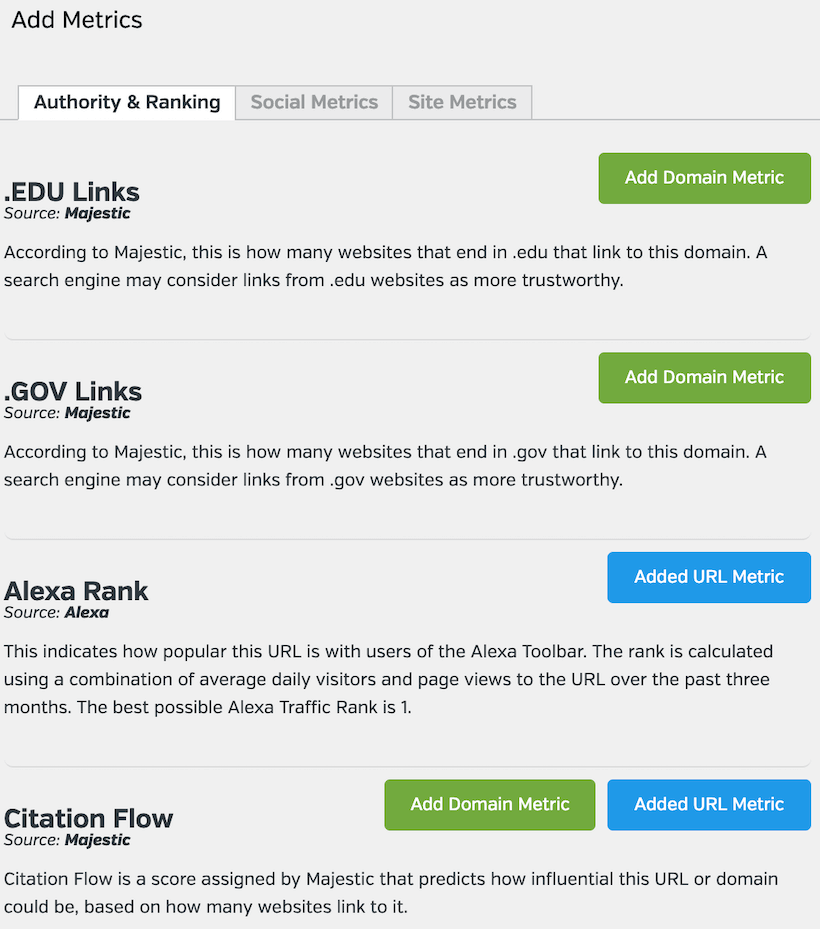

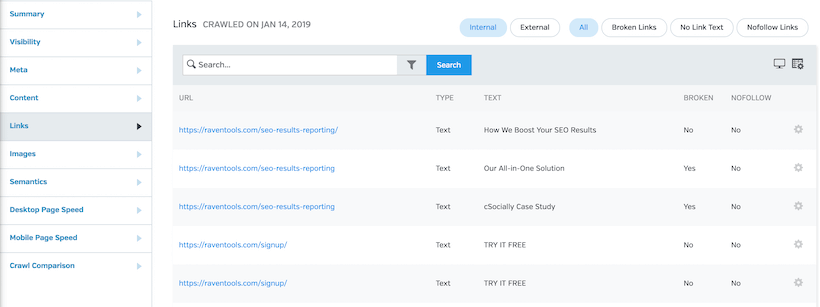

If you’re trying to evaluate quality, Raven has two different tools to assist. One is based off of Moz and Majestic, and the other is a custom URL and domain grader, which allows you to pick from about 20 metrics for your eval.

The custom grader is under “quality” and looks like this: (I love it, especially for niche research for affiliate SEO).

Here’s a few examples of what it looks like –

Once you’ve created your metrics, you can select the importance of the metric and then use the drag and drop editor. Feel free to use Raven’s default score as well.

By the end, you’ll have a score somewhere between 1 and 100. And just like that, you’ve evaluated a domain or a URL based on what is most important.
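To show the idea behind a weighted grade (and only the idea, since I don't know Raven's actual formula), here is a small sketch that blends several metrics into one score. It assumes each metric has already been normalised to a 0-100 scale, and the metric names in the usage example are placeholders I picked.

```python
def weighted_score(metrics, weights):
    """Combine metrics already normalised to 0-100 into one weighted score.

    metrics: {"name": value between 0 and 100}
    weights: {"name": relative importance, any positive number}
    """
    total_weight = sum(weights[name] for name in metrics)
    if total_weight <= 0:
        raise ValueError("at least one metric needs a positive weight")
    blended = sum(metrics[name] * weights[name] for name in metrics) / total_weight
    # Clamp to the 1-100 range mentioned in the text.
    return max(1, min(100, round(blended)))


# Hypothetical metrics and weights for one domain.
example = weighted_score(
    {"domain_authority": 60, "trust_flow": 40, "referring_domains": 80},
    {"domain_authority": 3, "trust_flow": 2, "referring_domains": 1},
)
```

The point of the weights is that the same domain can score very differently depending on what you decide matters most for your niche.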

SEO Linking Strategies

Backlinks are massively important, but because backlinking is about inbound links, i.e. links from other websites to yours, it’s not 100% under your control.

Backlinking is one of the more social aspects of SEO because it hinges on your engagement within a community of bloggers and influencers. This makes backlinking one of the more complicated SEO strategies.

However, there are many techniques you can use to build a strong network of quality backlinks. Here are the best white hat methods to use for 2021 backlink acquisition. (I’ll dig into a white hat versus black hat comparison towards the end.)

Original Data

One of the best ways to get backlinks is, incidentally, also the most difficult. Creating absolutely unique content that can’t be found anywhere else—like doing your own research or conducting a survey—is an effective way of providing value that nobody else can.

New information is always valuable to an industry, and your findings will quickly get picked up by other people in your community. Blogs and articles will use your results in their own content, which means hundreds or even thousands of potential backlinks for just one post.

Guest Blogging

Guest posting is often considered the backbone of backlinking, and for good reason. Not everyone has the time or the funding to do original research, but most people do have the time to write a post for someone else’s blog.

The process of guest posting is actually quite simple. First, you land a guest blog spot on a site by reaching out to websites with a similar target market to yours.

Next, you create original, engaging, useful content for their blog. In your content (or your author’s bio section), you can link back to pages on your website, earning you a backlink.

There are many benefits to guest posting besides backlinking. You use another blogger’s platform to expand your own and gain access to their regular roster of readers. You also get to establish yourself as an authority in your field. Lastly, it’s a great way to network and build relationships with other professionals within your community.

Personally, I like to use some free methods before I go into the paid route (paid by either paying someone to find opportunities or paying agencies to give me links via guest posts). I recommend reciprocal links (don’t go too heavy from one site). I also recommend Facebook groups (this link goes to my personal choice) that offer people opportunities to build links together by just being nice to each other.

Features

Getting featured by another business or website can expand your reach while giving you the backlinks you want.

You can get a feature from a news site or community blog in many different ways. One way is to join a community podcast where you discuss topics related to your expertise. The podcast host will often link back to your site so that listeners can follow up and learn more.

Another way is to join offline events. Participate in speaking engagements or host a conference; you’ll get a ton of backlinks from the news coverage, social media pages, and many others.

Like guest blogging, you can’t wait for opportunities to happen to you. You need to assertively seek out opportunities to get features.

The best way to do it is through PR. When you release a new product or have a major company announcement, write a press release. Send it to the relevant media outlets. Reach out to micro-influencers to try out your products/services. Be an active participant in your own promotion.

You can also pay for SEO press releases and it’ll generally cost you about $100 for 300+ press releases, which isn’t bad. If you’re still reading, I’ll add that you can also pump these links up with some T2 link building, but I won’t dig too deeply into that particular rabbit hole. That SEO Technique will be a post for another time.

Forum Posting

As accessible as blogs have become, some people prefer the interactivity and realness of a forum. Many people flock to forums to ask questions and get advice from actual people, often in real time.

There are general forums like Reddit or Quora where you can find every kind of community imaginable. There are also interest-specific forums for bodybuilding, cooking, automotive, and more.

Forums are a great venue to meet your target customers, engage with them, and build trust with your brand. You can also answer their questions and gain insight into their biggest problems.

When posting on a forum, it’s important not to be aggressive with the promotion. You are there to build relationships and provide solutions. Link back to your blog when it adds value.

Niche Edits

Niche editing is the process of adding a link to your site on existing content. Businesses love this technique because you can earn a quality backlink without having to write a guest post or do any extra legwork.

SEOs also love this SEO technique because Google (and other search engines) like aged content, a.k.a. content that has been around for a while. A backlink on a popular existing post is worth a lot more than a backlink on a new post! Done right, niche edits can get you much more valuable backlinks for significantly less effort than a guest post.

You can start getting niche edits by searching for sites with similar content to yours. If you work in the food and beverage industry, for example, this could involve going to recipe sites and food bloggers. Ask the site owners and bloggers if they could link to your content (and offer to link back to them as well!).

Many webmasters are actually quite happy to add links to other sites. They may even be more willing to do that than accept guest posts, since they don’t need to screen, edit, or upload anything new.

Mention Tracking

Mention tracking is sort of similar to niche edits, except much more specific to your business. Whenever someone mentions your business or product/service online, it contributes to your overall reputation. But you could be getting more out of that mention if they linked back to your site as well.

You can scan the internet for unlinked mentions of your brand. If you find a news feature or blog that mentions you, you can reach out to them, thank them for the mention, and ask if they could add your link.

You can even automate this process for the future by setting up an alert system that notifies you whenever someone mentions you online.
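Once an alert hands you a page, the "is this mention unlinked?" check is simple to script. Here is a minimal sketch using Python's standard library: it looks for the brand name in the page text and checks whether any link points at your domain. The brand and domain in the usage note are made up, and the scanner is naive (it also reads text inside scripts and ignores nofollow), so treat it as a first-pass filter before you email anyone.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class MentionScanner(HTMLParser):
    """Records page text and the host of every link on a page."""

    def __init__(self):
        super().__init__()
        self.text_parts = []
        self.link_hosts = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href") or ""
            host = urlparse(href).netloc.lower()
            if host.startswith("www."):
                host = host[4:]
            if host:
                self.link_hosts.add(host)

    def handle_data(self, data):
        self.text_parts.append(data)


def is_unlinked_mention(html, brand, domain):
    """True if the page names the brand but never links to the domain."""
    scanner = MentionScanner()
    scanner.feed(html)
    scanner.close()
    mentioned = brand.lower() in "".join(scanner.text_parts).lower()
    linked = domain.lower() in scanner.link_hosts
    return mentioned and not linked
```

For example, calling `is_unlinked_mention(page_html, "Acme Bikes", "acmebikes.example")` on each page from your alerts gives you a short list of sites worth a friendly outreach email.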

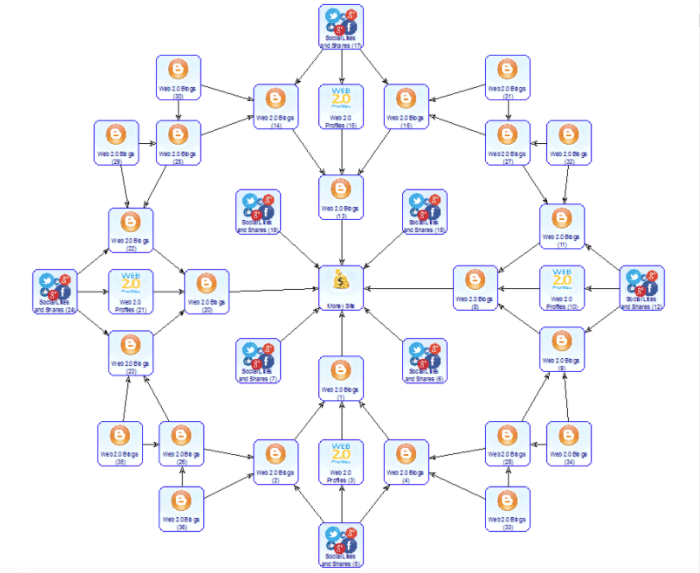

Tiered Links

With quality backlinking, you try to get a good link from a reputable site. Tiered linking is sort of the opposite of that; instead, you get multiple links from small low-authority sites to signal boost a blog post on a bigger site. Basically, it looks like the image below.

You have your target post on a popular or big website (tier 1). You link to your tier 1 post from 4-5 “tier 2” posts on smaller websites. You then link to those tier 2 posts from even more niche tier 3 sites. And so on and so forth (although usually tier 2-3 is more than enough!).

The backlinks from the lower-tiered sites improve the authority of the higher-tiered sites. The tier 3 links improve the tier 2 sites, and the tier 2 sites improve the tier 1 site. At the end of it, you have a “supercharged” tier 1 post whose backlink to your site is worth much more.

Tiered link building is a bit tricky. While it’s not strictly an unethical practice per se, tiered linking can be done in a way that manipulates the algorithm and leaves you vulnerable to harsh penalties from the search engine.

The best way to do it is to follow webmaster guidelines and focus on creating value. The secret is in approaching niche blogs for quality tier 2 backlinks.

Don’t rely on low-value, spammy websites that were built for the purpose of tiered linking. Instead, post content that benefits everyone involved:

- You, because the backlinks can improve your SERP ranking

- Your readers, because they learned something new from your content

- The site owners, because hosting good content boosts their credibility

Private Blog Networks (PBNs)

PBNs are similar to tiered linking in a sense. Both practices involve using tier 2 sites to build up a tier 1 post. However, PBNs take it up a notch by using high-authority domains to create super powerful tier 2 sites. Another difference is that instead of linking from third party sites, you link from sites you control.

We’ll go into detail later, but here’s an overview of the PBN process:

- Secure an expired domain with high domain authority

- Create content on that site

- Link back to your main post to award it a quality backlink

- Not get caught by Google

Yes, you read that last one right.

Using PBNs is an effective tactic in SEO. With backlinking as one of the most important factors in your SERP ranking, there are few things better than multiple quality backlinks from trusted sites.

However, this is considered an advanced black hat SEO technique, which means that there is a potential risk of getting penalized if Google finds out you’re using PBNs.

This doesn’t mean you shouldn’t use PBNs. This just means you need to be smart about it.

It’s a lot of work (and will cost some money), but the results are clear: a faster and more effective way to get the ranking you want.

Here’s how to build PBNs (the manual way) that ACTUALLY WORK in 2021:

Step #1 – Search for expiring or expired domains with high authority.

How do you find these domains? You have a lot of options, such as getting a domain broker or participating in domain auctions. The biggest auction and broker sites include NameJet, GoDaddy, PureQualityDomains, and SnapNames. You can also scour DomCop, WhoIS Domain Lookup, and PBN HQ for a list of expired domains and metrics.

Don’t worry about getting domains that are related to your business. The most important thing right now is getting domains with good metrics. You can customize the website later to make it work for your purposes.

TIP: Don’t buy all the domains in one day, and make sure you use different emails and names for each of the sites. The goal is to make each domain seem distinct and unrelated to the rest.

Step #2 – Set up your sites.

It’s good to diversify your portfolio, so to speak. Use different web hosts to prevent Google from associating your domains with each other.

Use different themes and layouts. Not only will this help you reach wider and more varied audiences, it’s also a great way to convince the search engine that your PBNs are legitimate.

There are many different ways to set up your site. It could be a blog, a business site, or a city-based website to boost your local SEO.

TIP: The website needs to feel as real as possible, so include an “About Us” section and other pages. This may involve creating a variety of personas for each of your PBNs as well as address/contact information. The personas don’t need to be experts in their fields; that makes it difficult to create content. Instead, introduce them as bloggers who just want to share their experiences and knowledge.

Step #3 – Create content for your PBNs.

Be creative about how you can relate your acquired domains to your website.

If you run a home cleaning service, for example, you might not know how to link back to it from a domain like femalefashiontrends.com. But it’s not impossible; you just have to think outside the box. You could write about how to keep your clothes and closet space clean, then link back to your site.

Whether you choose to keep your domain’s old niche or try to re-work it into something relevant for your business, the most important thing is that you regularly create content and put in contextual links back to your site. You could do reviews, listicles, or regular articles. It’s up to you how you want to tackle it.

This step is usually the hardest. You have to regularly come up with quality content for your PBN sites. Plus, you have to use different “voices” and write on a wide range of topics.

However, you don’t need to write super long articles; 500-2000 words per post should be more than enough. And you only have to publish weekly or every two weeks.

TIP: Do not use an SEO content generator. Those may save you time, but they create low-quality content that Google will easily sniff out. The content needs to be convincing to both users and search engines.

Step #4 – Link back to your money website, or the website whose SERP ranking you want to improve.

Be careful not to overdo it on the links; otherwise, your PBN will look like an obvious link farm.

Stick to 1 or 2 links to your money site per article. And don’t just use any anchor text; you have to choose them carefully. Try to use keywords as anchor texts whenever possible, but don’t use the exact same anchor text more than once.

For example, if your money site is for a photography studio, and your keyword is “professional photography”, you could use the following variations as anchor text:

- Photographer for hire

- How to take professional photos

- Photography blog

- Best photography studio

As much as possible, link to relevant pages or the homepage. However, you don’t want to link only to your site. Mix it up by linking to other authority sites.

TIP: With multiple PBNs, don’t link to every single one of your money sites from every single one of your PBNs. And not every SEO post should link back to your money site; throw some filler articles in there to make it more believable.

Running PBNs takes almost as much time and effort as running your actual site, but the backlink boost could be worth it. Just be careful to keep your PBNs separate from each other to avoid getting penalties.

Spammy Links

Not all links are good links. Your backlink is only as good as the quality of the domain that gave you that link. If it’s a bad website—full of spam, low quality, or otherwise irrelevant—there is a chance that the search engine could think that you’re part of that bad website’s network…and then get penalized for it.

To avoid this, you need to disavow spammy links or backlinks from sites that you don’t trust. You can use tools like Google Search Console or Raven Tools to find unwanted links, then disavow them so they no longer count against your site.
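Disavowing is done by uploading a plain text file to Google's disavow tool. As far as I know, the format is one entry per line, with a `domain:` prefix to disavow a whole domain and `#` for comment lines. Here is a small sketch that builds such a file from two lists; the helper name and the example domains are made up, so double-check the output against Google's current documentation before uploading anything.

```python
from urllib.parse import urlparse


def build_disavow_file(bad_domains, bad_urls, note=""):
    """Return the text of a disavow file: one entry per line,
    'domain:' prefixes for whole domains, '#' for comments."""
    lines = []
    if note:
        lines.append(f"# {note}")
    for domain in sorted(set(d.lower() for d in bad_domains)):
        lines.append(f"domain:{domain}")
    for url in sorted(set(bad_urls)):
        # Skip single URLs already covered by a whole-domain entry.
        host = urlparse(url).netloc.lower()
        if f"domain:{host}" not in lines:
            lines.append(url)
    return "\n".join(lines) + "\n"
```

Save the returned text as a `.txt` file. Disavowing is a blunt tool, so only list links you are confident are hurting you.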

Section 8: Black Hat SEO Vs. White Hat SEO

Before we move on too far past backlinks and content on this big ole monster guide on advanced SEO, we have to talk about ethical and unethical SEO practices.

In the world of advanced search engine optimization, it’s not really a question of “legality”; it’s about what’s effective.

With this in mind, most practices can be categorized into one of three groups.

First, there’s white hat SEO. White hat SEO refers to all the tactics and techniques that are search-engine approved. The strategies outlined here in this guide are all white hat SEO strategies; they follow the guidelines search engines have put in place to protect their users and provide them with high-quality content.

White hat SEO is characterized by practices that raise your profile organically. You have to earn your ranking by building better content, providing value to site visitors, and putting users first.

On the other hand, there are black hat SEO practices. These are more shady methods that search engines frown upon. Black hat techniques try to game or manipulate the system without providing value.

Below, we go into detail about the various black hat practices you need to avoid.

Link Buying

Backlink buying involves paying someone or offering goods/services in exchange for a backlink.

Link Farming

PBNs and link farming use the same tactics, except link farming doesn’t necessarily involve high-authority sites or expired domains. Link farmers build up tons of spammy, low-quality websites to build powerful tier 3, tier 2, and eventually tier 1 backlinks.

Cloaking/Redirecting

Cloaking is the act of making a page seem like it contains one thing when it really contains another. Sites lie to search engines about the contents of the page so they can rank for unrelated terms.

Sneaky redirecting is another black hat technique similar to cloaking. A 301 redirect on its own is a perfectly normal way to move a page; it becomes black hat when it’s used to deceive. The title and description on the search engine results page will show that it is about one topic, but when the user clicks on the link, they get redirected to a completely different (and unrelated) page.

Keyword Spamming

Stuffing your content full of keywords is not a great way to boost your SERP ranking. One, spammed keywords tend to sound unnatural or forced which increases the bounce rate of your page. Two, Google is actually quite good at catching (and penalizing) spammy sites. An example of this would be for me to repeat the words “Advanced SEO Techniques” over and over.

See what I did there? I just added another keyword to stuff the article while talking about keyword stuffing. Woah…meta.

Another ineffective black hat tactic is using invisible keywords. Keywords are added in the text so it is read by the search engine, but are otherwise hidden from the user by manipulating the color, size, or placement of the text.

Content Copying/Scraping

Long story short, content scraping is plagiarism. It is directly lifting parts or the whole of a text from another site. Content scraping is one of the reasons that Google has been cracking down on duplicate content.

Unlike some of the other advanced techniques (which are merely unethical), content scraping is actually illegal since you are using someone else’s intellectual property as your own.

Finally, there’s the third category: grey hat SEO. This category, as the name implies, falls somewhere in between white and black hat SEO. These are practices that are not strictly discouraged or penalized by search engines but are also not explicitly encouraged.

The issue with grey hat is that it toes the line of unethical SEO practices. It may not be penalized today, but it could very well be penalized in future updates to the algorithm.

Both black hat and grey hat SEO techniques are risky, and the consequences far outweigh the benefits. White hat SEO practices are sustainable, safe, and protect you in the long run.

Section 9: Internal Links, URLs, and Site Structure

Internal linking is one of the few aspects of link building that you actually have complete control over. While linking to your own content doesn’t have the same pull as a backlink from a reputable third-party website, it can still affect your overall ranking in other, more subtle ways.

By including links to other content hosted on your site, you are encouraging users to spend more time reading and scanning through your blog. This also helps Google crawl your website and index more of your pages.

Another benefit of internal linking is that your higher-value pages (the popular ones with lots of backlinks) can actually pull up the ranking of your lower-value pages just by linking to them. This is referred to as “link juicing”, where the authority of one page “trickles down” into other pages.

Another benefit of the Raven Tools Site Auditor is the ability to look at all of your anchor text.

Download to CSV, and then filter and create your own visualization of internal anchor text links.
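If you'd rather not do the filtering by hand, a few lines of Python can summarise the export for you. This sketch counts how often each anchor text appears. The column name `anchor_text` is a guess on my part, so swap in whatever header your CSV actually uses.

```python
import csv
import io
from collections import Counter


def anchor_text_counts(csv_text, anchor_column="anchor_text"):
    """Count how often each anchor text appears in a CSV export."""
    reader = csv.DictReader(io.StringIO(csv_text))
    counts = Counter()
    for row in reader:
        anchor = (row.get(anchor_column) or "").strip().lower()
        counts[anchor or "(empty)"] += 1
    return counts.most_common()
```

Read the file with `open("export.csv", encoding="utf-8").read()` and pass the text in. A long tail of "click here" or empty anchors is usually the first thing worth fixing.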

Silo and Site Structure

The site structure dictates both the search engine and the user’s navigational experience of your site. A good site structure makes it easier for your user to find the information they need. It also makes it easier for search engine bots to crawl and index your site.

Ideally, the structure—what your pages are and how they are related to each other— is planned out before the website is built to ensure that it is intuitive and easy. If you have an existing site, fixing the site structure should be a major component of your next redesign.

Siloing is one of the most effective ways of structuring a website, although it may not apply to all businesses. This involves grouping pages into categories and sub-categories. This works especially well for online shops that may host dozens or even thousands of different product pages.

You don’t have to do a silo-type structure for it to positively impact your ranking. As long as your pages are organized, easy to find, and don’t hinder the overall user experience, that’s what matters the most. I mentioned this above when I talked about centralized and decentralized structures.

URL Structure

Yes, even your web page’s URL matters for SEO.

The rule of thumb is that if a person can’t understand what the page might contain from the URL, then it’s a bad URL. If a human being can’t understand it, then a search engine won’t either.

Compare these two URLs:

sample.com/blog/2019/02/20/hskew9873-ssdmk.html

sample.com/blog/recipes/perfect-scrambled-eggs

The former URL tells you nothing about the content. The latter URL lets you know some very important information—what the page is about.

Here are some more tips on crafting good URLs:

- URLs are case sensitive, so make sure you don’t accidentally create duplicate pages under the “same” URL.

- Use hyphens and not underscores to separate words in your URL.

- Keep your URL as short as possible. Avoid unnecessary words like “and” or “the”, or multiple repetitions of the same word.

- Come up with a logical structure for future posts. You can have the URL flow from a category to a subcategory; use your sitemap as a reference.
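These tips are mechanical enough to automate. Below is a minimal sketch of a slug generator that lowercases the title, uses hyphens, drops a few filler words, and removes immediate word repeats. The filler word list and the `sample.com/blog` base are just illustrations taken from the example URLs above, not a standard.

```python
import re
import unicodedata

# Filler words the tips above suggest leaving out of URLs.
FILLER_WORDS = {"a", "an", "and", "the", "of", "to"}


def slugify(title):
    """Turn a post title into a short, lowercase, hyphen-separated slug."""
    # Strip accents so the slug stays plain ASCII.
    ascii_title = (
        unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode()
    )
    words = re.findall(r"[a-z0-9]+", ascii_title.lower())
    kept = []
    for word in words:
        if word in FILLER_WORDS or (kept and kept[-1] == word):
            continue  # drop filler words and immediate repeats
        kept.append(word)
    return "-".join(kept)


def post_url(category, title, base="sample.com/blog"):
    """Build a category/slug URL like the second example above."""
    return f"{base}/{slugify(category)}/{slugify(title)}"
```

Generating every URL through one function like this also keeps the casing consistent, which sidesteps the duplicate page problem from the first tip.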

EMDs (exact match domains) still show ranking benefits, although many disagree on this. Essentially, the two sides exist because some people conduct their own tests, and some take Google at face value.

URLs are especially important for E-commerce SEO. Breadcrumbing becomes something that can really affect you if you don’t do it properly with a larger site, so make sure you’re naming URLs correctly and structuring them so visitors can understand their page path.

Section 10: Technical SEO

I’ll expand on this section in upcoming articles, as it deserves its own mega post, but for now, we’ll cover images, video, duplicate content, robots.txt, redirects, nofollow tags, and canonical tags.

Images & Video

Visual content can catch the eye, make your article seem much more alluring, provide a visual aid to help customers understand complex information better, and give your readers a break from long chunks of text.

People are visual creatures, so make sure to use images, infographics, videos, and other kinds of visual content.

Infographics, in particular, can be an amazing tool to boost your search engine results page ranking.

Because they break down information into a digestible format, they are incredibly shareable. Infographics can also help you tell a story in a more creative way or help your readers visualize your point through data and charts.

On the other hand, video is an increasingly popular format. Compared to infographics, videos are much harder to produce. But because of that, they’re also more difficult to replicate and you won’t have as much competition. They also increase dwell time on your site and reduce bounce rate.

Videos are also highly shareable, and many people turn to videos when they search. In fact, after Google, YouTube has the highest market share of searches out of all the other search engines.

Google acquired YouTube more than a decade ago, and has since been incorporating YouTube videos into the search engine results page. Some searches also yield video snippets as the rank zero result. Some advanced SEO testers claim that videos from youtube have ranking benefits, but I’m not entirely sure where I fall on that claim, so for now, I’ll just say that adding a video for various type of web pages will increase user dwell time on your page and does wonders for local SEO.

The kind of video content you can make largely depends on the business you’re in, but the following formats are hits in every industry:

- How to’s/instruction videos

- Product videos that promote the brand

- Live broadcasts for Q&As, interviews, seminars, etc.

Upload your videos to the major video hosting platforms like YouTube, Facebook, Instagram, and Vimeo. Because search engines can’t crawl the video itself, make sure to give them more context in the description. It wouldn’t hurt to add one or two of your focus keywords as well.

Image Optimization

Google’s algorithm may be sophisticated, but it still cannot crawl visual content as well as it does text content. So you have to give Google a helping hand by including alt text. This tells the search engine what is in the visual content and how it works with the other content on the page.
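If you want to check a page for images that are missing alt text, a small script can do the first pass for you. The sketch below uses only Python’s built-in `html.parser`; the `MissingAltFinder` class and `images_missing_alt` function are names I made up for this example, and it assumes you already have the page’s HTML as a string.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collects the src of every <img> whose alt text is absent or blank."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        # A missing alt attribute and a whitespace-only alt are both flagged.
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src") or "(no src)")


def images_missing_alt(html: str) -> list[str]:
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


page = '<img src="shoes.jpg" alt="Red running shoes"><img src="banner.jpg">'
print(images_missing_alt(page))
# ['banner.jpg']
```

Treat the output as a to-do list rather than a verdict: purely decorative images are sometimes given an empty alt on purpose, and this sketch flags those too.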

Image optimization also involves resizing and compressing your image/video files so that they load faster, especially on mobile devices. Small file size doesn’t mean you can skimp out on quality, however; use the highest quality images/videos and format possible, like JPEG for images and MP4 for videos.

Personally, I trust my good ole panda sidekick, TinyPNG, for compression, and I resize images in the native editor on my Mac.

This image was 300 KB; after TinyPNG, it was 70 KB. I would hope most readers know about this, but just in case, I figured it would be good to include.

Duplicate Content

On-site duplicate content will cause your rankings to dip. Search engines tend to penalize exact-match content to prevent sites from copy-pasting entire pages to artificially boost their rankings.

That doesn’t mean that the crackdown on duplicate content is only for malicious sites. Many sites have had their content bumped off the first page or even de-indexed because of duplicate content on their own sites.

How do you solve a problem like this? You can either delete the duplicate content or consolidate it into a single page. If you must keep all of the pages, you can use the canonical tag, which we discuss later in this section.
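Before you can delete or consolidate anything, you need to know which pages are exact copies of each other. Here is a minimal sketch of one way to find them, assuming you have already extracted the body text of each page into a dictionary keyed by URL. The `fingerprint` and `find_duplicates` helpers are hypothetical names, and this only catches exact matches after whitespace and case are normalized, not near-duplicates.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text: str) -> str:
    """Hash the text after collapsing whitespace and lowercasing it."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicates(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose text is identical once normalized."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [urls for urls in groups.values() if len(urls) > 1]


pages = {
    "/red-shoes": "Our best red shoes.",
    "/shoes/red": "Our  best red shoes. ",
    "/blue-shoes": "Our best blue shoes.",
}
print(find_duplicates(pages))
# [['/red-shoes', '/shoes/red']]
```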

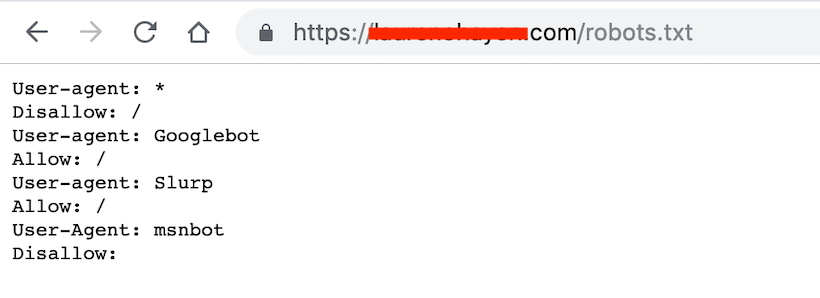

Robots.txt

The robots.txt file is an important tool in any webmaster’s toolbox. It tells search engine bots how to crawl your site’s content, which content they can’t access, and how long they have to wait between requests.

There are many reasons you wouldn’t want Google crawling certain pages of your site. This could include duplicate content that you don’t want to be penalized for, keeping some parts of your site private, and preventing a server overload when bots crawl multiple pages simultaneously.

The general format of a robots.txt file looks like this:

User-agent: [name of the bot]

Disallow: [URLs that the bot shouldn’t crawl]

It could also include one or more of the following:

Crawl-delay: [number of seconds the bot should wait between requests; not every crawler honors it]

Sitemap: [location of XML sitemap]

The robots.txt filename and the paths inside it are case sensitive, and the file needs to be located in the top-level directory. It’s important to note that even if you have a robots.txt file, bots may ignore the instructions (especially in cases of malicious bots). Robots.txt is also a public file that anyone can easily access by adding /robots.txt to your root domain, so avoid using robots.txt to hide private information.

Keep in mind that a robots.txt file can end up blocking Google from crawling your entire website, for example when a disallow-all rule is left over from development. Make sure you are on top of this for new sites; even on established sites, I’ve seen people who don’t realize what’s going on. Here is an example of a site with robots.txt disallowing one kind of bot while allowing another.

Just add /robots.txt to a site’s root URL to see the file.
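To sanity-check your rules before you publish them, you can run them through Python’s built-in `urllib.robotparser`. The sketch below is only an illustration: the robots.txt content, the `BadBot` name, and the example.com URLs are made up, and a real crawler may interpret edge cases differently from this parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block one bot entirely, limit everyone else.
ROBOTS_TXT = """\
User-agent: BadBot
Disallow: /

User-agent: *
Disallow: /private/
Crawl-delay: 10

Sitemap: https://www.example.com/sitemap.xml
"""


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = load_rules(ROBOTS_TXT)
print(rules.can_fetch("BadBot", "https://www.example.com/blog/"))        # False
print(rules.can_fetch("Googlebot", "https://www.example.com/blog/"))     # True
print(rules.can_fetch("Googlebot", "https://www.example.com/private/"))  # False
print(rules.crawl_delay("Googlebot"))                                    # 10
print(rules.site_maps())  # ['https://www.example.com/sitemap.xml']
```

If the second line ever prints `False` for a page you want indexed, that is the situation to fix before anything else.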

Nofollow Tag

Remember when we talked about outbound linking? If you link to a third-party website, you are giving them “link juice”. You are signalling to Google your vote of confidence in that particular site.

What if you don’t want to give them that backlink? What if you don’t trust the site, and don’t want to risk being seen by search engines as part of that network?

You use the ‘nofollow’ tag.

The nofollow tag tells the search engine that while you may be linking to a particular site, it doesn’t mean you are vouching for it. It asks bots not to follow the link or pass your vote of confidence along to that site.

A nofollow HTML tag looks like this:

<a href="http://www.example.com/" rel="nofollow">Anchor Text</a>

You’ll see sites like Wikipedia use this for just about every outbound link they have on their site.

Keep in mind that nofollows still have an influence on page rank. I tend to nofollow competitor links for keywords if I happen to need to link to something they’ve done. I also tend to nofollow non-secure sites.
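If you want to see which outbound links on a page are followed and which are nofollowed, a short script can list them. This is a rough sketch using Python’s built-in `html.parser`; the `OutboundLinkAuditor` class and `audit_outbound_links` function are hypothetical names, and it assumes you pass in your own domain so internal links can be skipped.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class OutboundLinkAuditor(HTMLParser):
    """Sorts external links into followed and nofollowed lists."""

    def __init__(self, own_domain: str):
        super().__init__()
        self.own_domain = own_domain.lower()
        self.followed = []
        self.nofollowed = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href") or ""
        host = (urlparse(href).hostname or "").lower()
        # Relative links and links to our own domain are internal: skip them.
        if not host or host == self.own_domain or host.endswith("." + self.own_domain):
            return
        rel_tokens = (attributes.get("rel") or "").lower().split()
        if "nofollow" in rel_tokens:
            self.nofollowed.append(href)
        else:
            self.followed.append(href)


def audit_outbound_links(html: str, own_domain: str) -> tuple[list[str], list[str]]:
    auditor = OutboundLinkAuditor(own_domain)
    auditor.feed(html)
    return auditor.followed, auditor.nofollowed


page = (
    '<a href="/about">About</a>'
    '<a href="http://other.test/" rel="nofollow">A</a>'
    '<a href="https://partner.test/">B</a>'
)
print(audit_outbound_links(page, "example.com"))
# (['https://partner.test/'], ['http://other.test/'])
```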

Canonical Tag

As we mentioned in the duplicate content section, the canonical tag can be used when you have duplicate pages that you can’t consolidate or delete.

This tag lets search engine bots know that a specific page is a copy of another page. It also asks the search engine to index the main page in place of the duplicate, and to credit the duplicate’s authority to the main page.

Canonical tags are most common for pages with multiple URLs, such as:

- homepage.com

- homepage.com/home

- homepage.com/index.php

- www.homepage.com

- And so on and so forth

When using the canonical tag, be aware that search engines may choose to ignore it if the content is too different. It is best used on duplicate content or near-duplicate content where only a small element is different (location, price, etc.).

Also, make sure you’re consistent with your canonical tag. Do not put on Page 1 that Page 2 is the main page, then put on Page 2 that Page 1 is the main page. Likewise, do not canonicalize Page 1 to Page 2, only to redirect Page 2 back to Page 1.

You would put the following HTML tag in the code of the duplicate page, where the link is the URL of the main page:

<link rel="canonical" href="http://thisisthemainpage.com"/>
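Consistency is easy to get wrong on a big site, so it can help to check it with a script. The sketch below reads the canonical URL out of a page’s HTML and then flags pages whose canonical target points somewhere else again. The `get_canonical` and `find_conflicts` helpers are names invented for this example, and the page paths are placeholders.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Remembers the href of the first <link rel="canonical"> it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        rel_tokens = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_tokens:
            self.canonical = attributes.get("href")


def get_canonical(html: str) -> Optional[str]:
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


def find_conflicts(canonicals: dict[str, str]) -> list[str]:
    """Return pages whose canonical target is itself canonicalized elsewhere."""
    conflicts = []
    for page, target in canonicals.items():
        if target == page:
            continue  # a self-referencing canonical is consistent
        # Pages we know nothing about are assumed to point at themselves.
        if canonicals.get(target, target) != target:
            conflicts.append(page)
    return conflicts


print(get_canonical('<link rel="canonical" href="http://thisisthemainpage.com"/>'))
# http://thisisthemainpage.com
print(find_conflicts({"/page-1": "/page-2", "/page-2": "/page-1"}))
# ['/page-1', '/page-2']
```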

301 Redirects

Even though the two are often conflated, a 301 redirect functions very differently from a canonical tag.

Whereas the canonical tag allows users to view duplicates of a page under different URLs, a 301 redirect completely bypasses the first URL and automatically brings the user to a second URL. 301 redirects also pass on all link juice/domain authority to the new URL.

There are a few reasons to use 301 redirects. One is to avoid duplicate content, similar to the canonical tag. Another is to fix “broken links”: when you change the URL of a page, a redirect lets users still reach it from the old URL.

Note that using a lot of 301 redirects, especially chained one after another, can significantly slow down your site. Keep them to a minimum and use them only when necessary.

Also, only use 301 redirects on related/similar pages. A user clicking on a search result for “top 10 romantic comedies” would not want to be redirected to content about construction equipment.
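One practical way to keep redirects to a minimum is to make sure no old URL has to hop through several redirects before it lands. Here is a small sketch of that idea, assuming your redirects are stored as a simple old-URL to new-URL mapping. The `resolve_redirect` and `flatten_redirects` functions are hypothetical helpers, not part of any server or plugin.

```python
def resolve_redirect(start: str, redirects: dict[str, str]) -> tuple[str, int]:
    """Follow redirects from start; return the final URL and the hop count."""
    seen = {start}
    current = start
    hops = 0
    while current in redirects:
        current = redirects[current]
        hops += 1
        if current in seen:
            raise ValueError(f"redirect loop detected at {current}")
        seen.add(current)
    return current, hops


def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Point every old URL straight at its final destination."""
    return {old: resolve_redirect(old, redirects)[0] for old in redirects}


redirects = {"/old": "/newer", "/newer": "/newest"}
print(resolve_redirect("/old", redirects))
# ('/newest', 2)
print(flatten_redirects(redirects))
# {'/old': '/newest', '/newer': '/newest'}
```

After flattening, a visitor arriving at `/old` is sent to `/newest` in one hop instead of two.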

Last but not least, we’ll touch upon SEO for local businesses. This won’t include any groundbreaking advanced SEO techniques, but it will cover the basics. Local SEO is one of those niches where I find immense value in paying consultants for their courses. The lessons are incredibly actionable, and you can go out and make a killing pretty quickly.

Section 11: Local SEO

To make SEO even more complicated, there’s a relatively clear line between general/global SEO and local SEO.

If you’re a small business based in a particular community, or if you are a service-oriented company with a specific service area, your business probably thrives on local customers.

That means you want to build your visibility not just to the general public, but to people who are actually near you. Improving local SEO will help you reach customers in your communities and convert their curiosity into real sales.

Plus, if you neglect your local SEO, you’re missing out on the 80% of consumers who use local searches to find businesses, or the 50% of consumers who perform a local search and visit a business within the same day.

Below are the 3 most important practices to improve your local SEO.

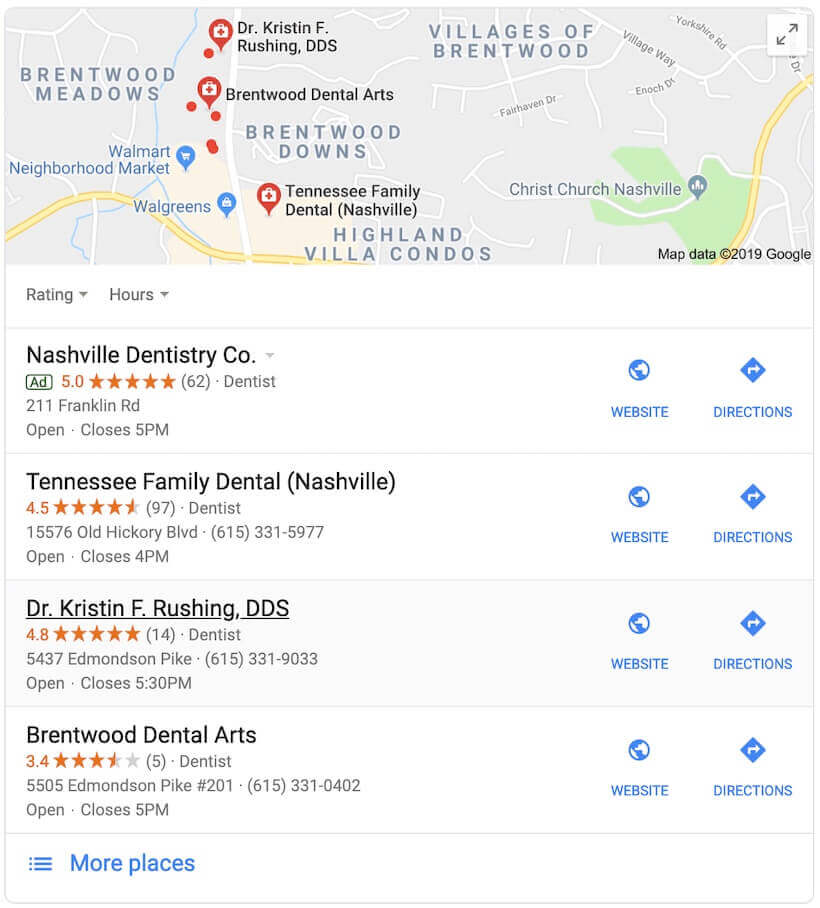

1. Google My Business

Google My Business is pretty much non-negotiable if you want to boost your local SERP rankings. GMB pulls a variety of information together into some of the most valuable search spots.

Not only will Google be more likely to recommend you for relevant search terms with local intent, but you can also get prime real estate in the local map pack, a list of 3 or 4 suggestions that are given to the user.

In your GMB listing, you can list a variety of important business information, such as:

- Name

- Address

- Phone number

- Operating hours

You’ll also be able to post promotions, offers, or announcements via your profile, or answer real customer questions about your business.

2. Business Listings

Besides Google My Business, there are many other directories that you need to have a presence on. There isn’t a definitive list of the essential business directories since it largely depends on your niche, but a quick search of “[your industry] business directory” should yield all the results you need to get started.

Add or claim your listing on as many relevant business directories as possible. Ensure that your information on those listings exactly matches your information on your GMB profile. Fill out as much information as you can, and keep it updated regularly.
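Checking listings by eye gets tedious once you are on more than a handful of directories. The sketch below compares a directory listing against your GMB details and reports which fields differ. Everything in it is an assumption for illustration: the `normalize_listing` and `find_mismatches` helpers, the field names, and the sample business. It ignores only case, spacing, commas, and phone punctuation, so a real difference like “St” versus “Street” still gets flagged.

```python
import re

FIELDS = ("name", "address", "phone")


def normalize_listing(listing: dict[str, str]) -> dict[str, str]:
    """Strip away formatting noise so only real differences remain."""
    return {
        "name": " ".join(listing["name"].lower().split()),
        "address": " ".join(listing["address"].lower().replace(",", " ").split()),
        "phone": re.sub(r"\D", "", listing["phone"]),  # keep digits only
    }


def find_mismatches(reference: dict[str, str], other: dict[str, str]) -> list[str]:
    """Return the fields where the other listing disagrees with the reference."""
    ref = normalize_listing(reference)
    oth = normalize_listing(other)
    return [field for field in FIELDS if ref[field] != oth[field]]


gmb = {"name": "Acme Plumbing", "address": "12 Main St, Springfield", "phone": "(555) 010-1234"}
directory = {"name": "Acme  Plumbing", "address": "12 Main Street, Springfield", "phone": "555-010-1234"}
print(find_mismatches(gmb, directory))
# ['address']
```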

3. Reviews

Surprisingly, 84% of people trust online reviews just as much as they trust a personal recommendation. Encourage your customers to leave you reviews, and you could see interest in your business soar.

Having reviews makes you look more authentic. It lets people know what kind of experience they can expect and helps them make better decisions about your brand.

Reviews aren’t only helpful when they’re positive. In fact, how you respond to criticism can be better PR than all of the positive reviews in the world. A negative review gives you a great opportunity to respond, demonstrate a willingness to listen to your customers’ needs, and turn critics into satisfied customers.

For insane insights into local SEO and a host of other advanced SEO techniques, I wrote up a mega guide to help others learn SEO from experts. I’ve linked to it in the article already, but I wanted to make sure you give it a read since it truly contains some of the best information on the web for advanced and intermediate SEO.

Conclusion

There have been a lot of changes in SEO over the past year alone, and we’re sure that 2021 has even more in store. However, there are pillars of SEO that remain as strong and significant as ever, such as backlinking, website speed, and quality content.

Advanced SEO might feel complicated, but it really all boils down to how much value Google thinks you provide to your users. Be creative, come up with unique approaches to problems, implement industry best practices, and use the right techniques to improve your SERP ranking this year.
