When RankBrain was announced to the world in late 2015, Google confirmed it as one of the top three factors that determine search rankings.

Andrey Lipattsev, a Search Quality Senior Strategist at Google, confirmed in a Q&A that backlinks were also among the top factors. As most of you can guess, content is the missing piece of the trifecta.

Why list 15 link building strategies?

Short answer: link building methods are often overused, and each method’s effectiveness diminishes as more and more SEOs hurl themselves at it in a drunken ecstasy, dreaming of ranking glory.

Building links is a catch-22.

You need links to be seen as an authority, but you also can’t do anything Google deems “manipulative.” You can always outsource to a trusted link building company, which is nice, but it’s usually more cost-effective to develop your own strategy with an SOP (standard operating procedure).

What’s a marketer to do when the client isn’t satisfied with “just build quality material and hope it organically gets picked up”?

While creating quality content is important, quality content alone can’t be expected to rank or earn enough organic links when competitors already have quality content plus a strong backlink profile.

In the end, ranking isn’t really about following every rule from the Google Webmaster Blog or every suggestion an SEO celebrity tweets.

Ranking should be about what works, and for those of you that are serious about SEO, I’m sure I’m just preaching to the choir.

As a side note: overusing many of these strategies, or implementing backlink campaigns carelessly, can do more harm than good.

So here are my 15 favorite link building strategies that work.

And one final thought before you dive in: keep in mind that the more popular an outreach strategy becomes, the harder it is to run successful outreach with it.

If you got 1,000 marketers asking you for a link exchange or a guest post, would you be more or less likely to accept? I’ll tell you from personal experience that link builders need to put in more and more effort, and communicate more and more value, before I accept any link building proposition.

So, let’s dig into some of the strategies.

1. Create microsites and link out from them

A microsite is a domain or subdomain with a handful of pages that cluster together and function just well enough to pass link juice. I don’t recommend using subdomains as microsites, but a separate domain should do just fine.

Generally, these sites are used to promote a campaign or to host media publications of some kind, but they’re also a low-effort way to create some backlinks.

2. Use a guest posting service

A guest posting service finds blog opportunities for you. Not all of these services work, so don’t assume that you can pick any old service.

My personal recommendation is DFY Links Guest Posts. Charles really knows what he’s doing and has been in the SEO game for a little over seven years (which is a long time to do SEO).

Be careful of services built on Web 2.0 nonsense that don’t do a good job of building out their network. I recommend sticking to services that connect you with bloggers in specific niches.

3. Buy out existing blogs

This is a Google-friendly way of link building (as in it’s not punishable by site banishment or whatever you want to call Google’s slaps to the wrist). If you do this wrong, your white hat can quickly appear to be black.

Stay in your niche.

I’ve seen companies like SitePoint mass-redirect blog articles to WooRank, and it seems to work just fine for them. Make sure you do this carefully, page by page.

If you’d like to see an example of some nice, juicy page-by-page redirects, just follow the SitePoint link to see a list of redirected articles. Note: this is only meant to illustrate how you can go about redirecting your posts.

When you redirect, make sure you’ve written an article within the niche so the redirect will seem natural. The goal is to make quality signals, not just shots in the dark.

This strategy is a highly effective way to build authority sites, rather than thin web 2.0 sites that Google usually slams.
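Page-by-page redirecting comes down to a one-to-one map from each acquired article to a topically matching article on your own site. The Python sketch below assumes an Apache server (it emits mod_alias `Redirect 301` lines for the acquired domain’s config or `.htaccess`) and a map you have already curated by hand; the paths and the `example.com` domain are hypothetical placeholders. It refuses blanket redirects to a homepage, since those are the opposite of the careful, page-by-page approach.

```python
from urllib.parse import urlparse

def build_redirect_rules(redirect_map: dict[str, str]) -> list[str]:
    """Turn a hand-curated {old_path: new_url} map into Apache mod_alias lines."""
    rules = []
    for old_path, new_url in redirect_map.items():
        # Page by page: every old article must point at a specific, relevant
        # article, never a blanket redirect to the homepage.
        if urlparse(new_url).path in ("", "/"):
            raise ValueError(f"{old_path} redirects to a homepage; pick a matching article")
        rules.append(f"Redirect 301 {old_path} {new_url}")
    return rules

# Hypothetical example: two acquired posts mapped to niche-matching articles.
redirects = {
    "/seo-audit-checklist": "https://example.com/blog/seo-audit-guide",
    "/keyword-density-myths": "https://example.com/blog/on-page-seo-basics",
}
for rule in build_redirect_rules(redirects):
    print(rule)
```

The hard part, choosing a genuinely relevant target for each old article, stays a manual editorial decision; the script only keeps the output consistent and blocks the lazy homepage redirect.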

4. Conduct an Expert Round-Up

The value of an expert roundup lies in the credibility that comes automatically from having an army of experts share their own insights on a topic, while you gather traffic from the experts’ audiences.

The question people ask most about round-up posts is, “How do you decide which influencers to contact, and are there any criteria for the roundup post?”

So glad you asked. Yes: check each site’s DA (Domain Authority), see how many followers they have on social media, and make sure they update their blogs regularly.

The goal is to tap into larger audiences while getting a link. This strategy is effective because it should give you multiple backlinks, original content, and traffic (which is in itself a ranking signal).
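The three checks for round-up contributors (DA, social following, posting frequency) are easy to turn into a repeatable shortlist. A minimal Python sketch, assuming you’ve already pulled each expert’s DA and follower count from your SEO and social tools; the thresholds (`min_da=40`, `min_followers=5_000`, `max_days_idle=30`) and the sample experts are hypothetical and should be tuned to your niche.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class Expert:
    name: str
    domain_authority: int   # DA as reported by your SEO tool of choice
    social_followers: int
    last_post: date         # date of their most recent blog post

def shortlist(experts, min_da=40, min_followers=5_000, max_days_idle=30, today=None):
    """Keep experts who clear all three round-up criteria, highest DA first."""
    today = today or date.today()
    cutoff = today - timedelta(days=max_days_idle)
    picks = [
        e for e in experts
        if e.domain_authority >= min_da
        and e.social_followers >= min_followers
        and e.last_post >= cutoff  # blog updated recently
    ]
    return sorted(picks, key=lambda e: e.domain_authority, reverse=True)

# Hypothetical candidates.
candidates = [
    Expert("Ana", 62, 18_000, date(2024, 5, 20)),
    Expert("Ben", 35, 40_000, date(2024, 5, 28)),  # DA too low
    Expert("Cy", 55, 9_000, date(2023, 11, 2)),    # blog has gone quiet
]
print([e.name for e in shortlist(candidates, today=date(2024, 6, 1))])  # ['Ana']
```

Passing `today` explicitly keeps the result reproducible; in day-to-day use you’d leave it out and let it default to the current date.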

5. Use journalism publication sites

The most common example of a journalism publication site is HARO (Help a Reporter Out), which is not news to most of you.

When I spoke with Ammon Johns about the difficulty I’ve had gaining traction on HARO, he advised leaving it behind, because bloggers and SEOs flooded the service six years ago. If you’re looking for a HARO alternative, I suggest SourceBottle.

If you’re following Ammon’s advice, I’d recommend actually cultivating real-world or direct social-media connections with reporters – not via tools, but via smarts.

This type of advice isn’t popular because it’s less cookie cutter. It’s the cookie cutter advice that often gains media attention, unfortunately.

6. Purchase or gain access to multiple sites in the same niche

This advice is fairly self-explanatory. In a previous post on internal links, we discussed how the power of internal links stems from your ability to directly control the flow of link juice. In a similar vein, controlling multiple sites lets you control how you pass authority between them.

Be careful with this strategy. You can easily get caught cheating the system if you abuse it.

When you do this with multiple sites, you essentially look like a PBN (private blog network), and most marketers worth their salt will tell you to avoid PBNs (as an aside, PBNs can still work, but it’s incredibly difficult).

Raven Tools and TapClicks are both marketing sites that can pass external links to each other, but when that happens, I make sure the link is actually relevant.

I haven’t personally tested how far you can take this strategy, but it’s definitely helpful if you’re an SEO in control of several sites in the same niche.

7. Ghost Guest Posting (Purchased Also Works)

We’re all familiar with guest posting. Raven Tools accepts guest posts all the time.

Normally, in guest posting, a site gains free content in exchange for a backlink. If you want to get creative, you can pay a blogger to write a post on their own blog (similar to influencer marketing) and, as part of the agreement, ask for a link to your target page.

Ghost guest posting is basically paying a blogger to write whatever they want within a certain niche.

If the blogger doesn’t have the time, you can write the article yourself, give it to them for free, and see if they’d be interested in using it.

You’ll probably need to pay the blogger if this person is familiar with SEO and understands the real value proposition of this exchange.

But honestly, who is going to say, “No, I won’t accept $100-200 for you to give me free content,” provided the content is up to the blog’s quality standards and fits its niche?

It’s also worth mentioning that some claim that more page value is created when you avoid going the guest post route for your blog, but I can’t vouch for this information.

If anyone wants to leave a comment and provide some real data, it would be much appreciated.

8. Provide Graphics and Visuals to Webmasters and Bloggers

I feel like this strategy specifically is at risk of losing its potency due to the market being flooded with image distribution.

The premise of this strategy is that images boost the value of a blog post, so if you can add value to an existing piece of content on someone’s blog, then they will reciprocate by providing you a link.

The recipient is not forced to give you a link. You aren’t extorting the webmaster. The guiding principle is that you’re putting your trust in karma. Put that good energy out there and supply value to the community.

Kevin Costner said it best – If you build it, links will come.

9. Use Scrapebox and Search Operators to find Opportunities

When it comes to building links, many companies (including at least six major SaaS companies I know of) outsource the project to the Philippines or India.

If you’ve done any link building since the Penguin update, you’ll know that backlink opportunities are a bit harder to find, or at least require more care in implementation.

If you outsource your link building, you know it’s difficult to trace the value of many of these outsourced activities, and as a manager, it’s incredibly helpful to give your team a clear methodology to follow.

This is where Scrapebox and search operators come in. The idea behind Scrapebox’s metrics checker is that you can look under the hood of just about any metric that determines a valuable link opportunity.

The tool even allows you to add some custom code to surface any details you may want.

It costs $47, but hey, in a world of non-stop subscriptions, it’s nice to know that a one-time payment gets you access to the tool for life.

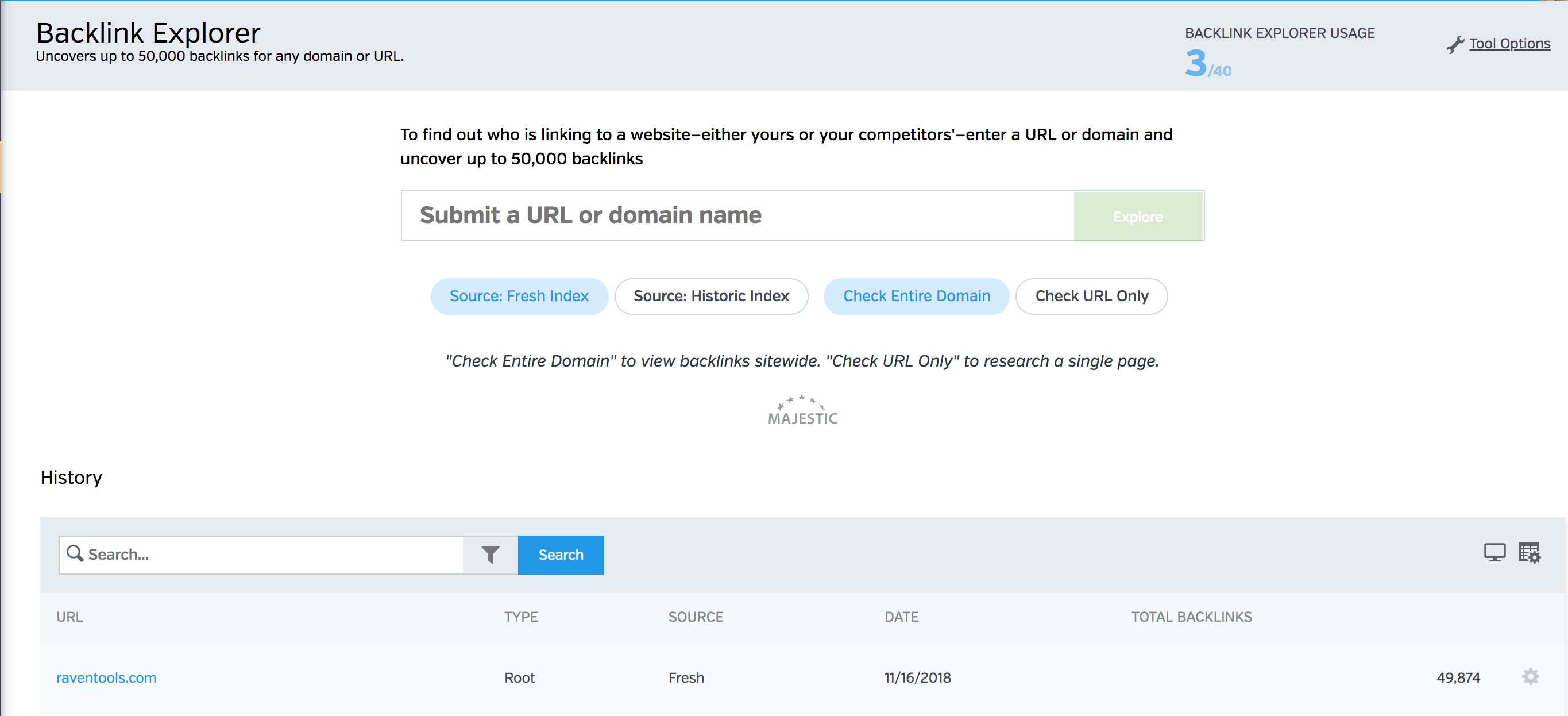

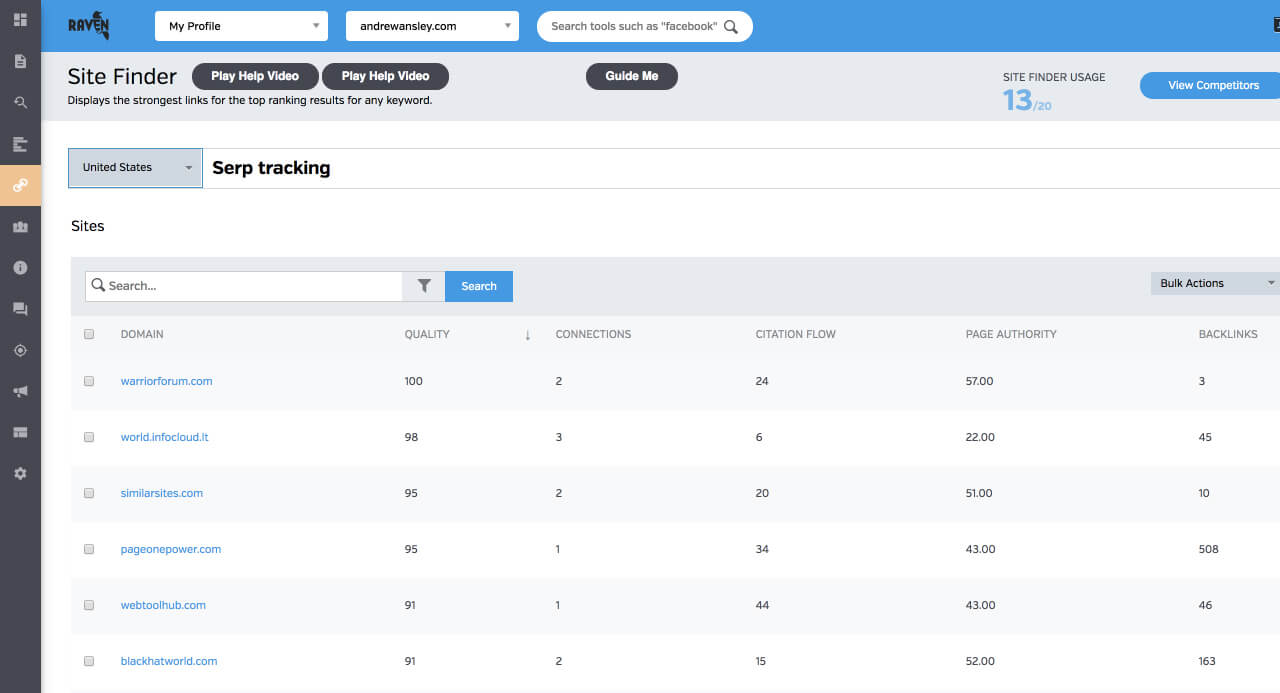

If you’re looking for a little more power, you can also use Raven’s own backlink explorer and domain research tool to evaluate backlinks and domains on a variety of important metrics.
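Search operators are what make prospecting repeatable for an outsourced team: the same niche keywords get combined with the same footprints every time. A minimal Python sketch that builds those query strings; the footprint list is a hypothetical starting point rather than a proven set, and the `-site:` exclusion is just one example filter. The output is plain text you could paste into Google or into a harvester such as Scrapebox.

```python
from itertools import product

# Hypothetical footprints; swap in whatever patterns your own prospecting turns up.
FOOTPRINTS = [
    '"write for us"',
    '"guest post"',
    'intitle:"resources"',
    "inurl:links",
]

def build_queries(keywords, footprints=FOOTPRINTS, site_filter=None):
    """Combine every niche keyword with every footprint into one search query each."""
    queries = []
    for kw, fp in product(keywords, footprints):
        query = f'"{kw}" {fp}'  # quote the keyword so it is matched as a phrase
        if site_filter:
            query += f" {site_filter}"
        queries.append(query)
    return queries

for q in build_queries(["marketing dashboard", "seo reporting"], site_filter="-site:pinterest.com"):
    print(q)
```

Handing a team the generated list, instead of asking them to improvise searches, is what makes their results traceable: every prospect can be tied back to the query that found it.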

Link Building Email Formula

Next step? I recommend using Campaign Monitor, ActiveCampaign, or Mailshake to reach out to link prospects.

Autocorrect tried valiantly to change Mailshake to milkshake, but after 15 seconds I finally convinced the computer that I did indeed mean “mailshake” and not “milkshake.”

But I digress.

As I was saying, you can use whatever software you want as your mail solution. Just find one that fits your budget, since you’ll be sending thousands of emails.

I’m sure you’re thinking, “well, that’s great, but how do I go about pitching these people?”

The spirit of the message is as follows.

Tell them something along the lines of “I was doing some backlink research for an article of mine and I ran across your site. I’m a firm believer in providing value to someone first before asking for anything so I’ve attached an image/graphic that my designer created for your (insert URL) post.

The image has no strings attached, feel free to use it however you please. We do think that our article (insert URL) provides readers with excellent information on ____ and we’d love it if you gave it a look to see if it was worth linking to. Cheers!”
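Sending that pitch to thousands of prospects means merging per-prospect details into one template. A minimal Python sketch using the standard library’s `string.Template`; the field names (`first_name`, `their_post_url`, `our_article_url`, `topic`) are hypothetical, and the actual sending would happen in whichever mail tool you picked.

```python
from string import Template

# The pitch from above, with the "(insert URL)" blanks turned into named fields.
PITCH = Template(
    "Hi $first_name,\n\n"
    "I was doing some backlink research for an article of mine and ran across your site. "
    "I'm a firm believer in providing value first, so I've attached a graphic my designer "
    "created for your post at $their_post_url.\n\n"
    "No strings attached; feel free to use it however you please. We think our article "
    "($our_article_url) gives readers excellent information on $topic, and we'd love it "
    "if you gave it a look to see if it's worth linking to. Cheers!"
)

def render_pitch(prospect: dict) -> str:
    # substitute() raises KeyError when a field is missing, so no pitch
    # goes out with an unfilled placeholder in it.
    return PITCH.substitute(prospect)

print(render_pitch({
    "first_name": "Sam",
    "their_post_url": "https://blog.example.org/dashboards",
    "our_article_url": "https://example.com/marketing-dashboard-guide",
    "topic": "marketing dashboards",
}))
```

Using `substitute()` rather than `safe_substitute()` is deliberate: a half-filled pitch does more damage than one that never gets sent.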

You can get more witty and humorous and less cut and dry if that strategy works for you. I’m not going to include the secret sauce, so you’ll need to develop that yourself.

Here’s an example pitch I made up in 20 seconds while jet-lagged:

“Aloha. Greetings from my digital island. I couldn’t help but notice that you had some great coconut trees, and I think my digital island also has some nice coconuts. I’ve grown a bit tired of my own coconuts, and I’m in need of external coconuts. I would love to strike up a coconut exchange of some kind.”

Obviously, this is a dumb example, but the idea is to just be less cut and dry at times.

Whatever you do, remember that you’re pitching to people that are basically professional pitchers and you either need to make them laugh or you need to be the epitome of professionalism.

My personal preference is always the laughter route.

10. Steal Rich Snippet Image Links

Diggity Marketing conducted a snippet experiment that supposedly had a lot of success, including stealing rich snippet images with the following techniques:

- Optimized Alt Text – You need to have a full match or partial keyword match for what you want to rank for. Semantic matches don’t seem to work as well from our experiments.

- Site Authority – You can’t just steal the image from an authoritative giant. You need to punch in your weight class or a little below. Target snippets where you are equally authoritative or more authoritative.

- Topical Content Relevance – For the longest time, I had a competitor’s image on my featured snippet for “How to make a marketing dashboard.” Frustrating. One thing you’ll notice is that the image had optimized alt text, which made me wonder: did the image uploader for this particular post optimize the alt text? NOPE. Optimize the alt text and, boom bam, I control the entire snippet. Key lesson: you can only get images on snippets if the image actually applies to the snippet.

- Follow the Diggity example:

11. Social Embed Stacking

This advice comes straight from this very, very long (15,000+ word) backlink article from mebsites.

We have been running a lot of experiments with posting links, video, maps, my maps content to social media platforms then embedding it in web2.0, websites, pages, PBNs to create relevance and crosslinking. We are happy to report this seems to be quite beneficial.

We find that it helps: web2.0 to get indexed and stay indexed more, improves relevance to the onpage data even when its just nonsense with only signals, drives some cross traffic, Google plus is instant death for anything shared, Twitter is best and Facebook is a distant second.

Be warned: Web 2.0 is always playing with fire, so be careful as you experiment with this.

12. Target powerful sources for both internal links and external links

Internal links pass juice to other articles, but you can get some potent juju by linking to case studies. Case studies are data-heavy, and in case you aren’t aware, the type of content that really reigns supreme is data, specifically case studies.

They demonstrate how claims are connected to real results, and this is very powerful. Create your own case studies, use that internal link magic to get some good authority boosts, and target case studies as external link opportunities.

13. Access your warmest leads

Raven’s parent company, TapClicks, is a perfect example of a large pool of warm leads. TapClicks is a massive omnichannel SaaS company with around 200 partners, including Mailchimp, Marketo, CallRail, AdRoll, Salesforce, and Moz.

Suppliers can provide great links from their blogs. As a software company, Raven has access to quite a pool of warm leads if we decide to run a link building campaign.

Just keep in mind that you can reach out to suppliers, and to anyone else you do business with, to work together on links.

14. Write content and send it away for free

I know this sounds crazy, but sit here and think about it. You can have a content writer draft some passable content for $0.05 a word and send a decent blog article to different bloggers, offering to let them have it for free as long as they keep your link. I get asked to do this all the time, and depending on the particular state of my content, I accept these kinds of offers.

The better the content, the more likely someone will actually use it, so you should figure out the amount of money you want to spend creating this content.
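At $0.05 a word, the budgeting is simple arithmetic: a 1,500-word article costs 1,500 × $0.05 = $75, so a $1,000 budget covers 13 such articles. A tiny Python helper for running your own numbers; the budget and word count in the example are placeholders, and it works in whole cents to avoid floating-point rounding.

```python
def content_budget(budget_dollars: int, words_per_article: int, cents_per_word: int = 5):
    """Return (cost per article in dollars, how many full articles the budget covers)."""
    cost_cents = words_per_article * cents_per_word   # e.g. 1,500 words x 5 cents
    articles = (budget_dollars * 100) // cost_cents   # whole articles only
    return cost_cents / 100, articles

print(content_budget(1000, 1500))  # (75.0, 13)
```

Raising the word count or the per-word rate for better content shrinks the article count, which is exactly the trade-off to weigh: fewer, stronger pieces are more likely to actually be published.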

15. Get a backlink with YouTube

YouTube is one of the largest content hubs on the internet, and it functions almost like a Google 2.0 search engine. YouTube videos provide two primary benefits.

The first benefit is that you can get more dwell time on a page. Any time you can keep someone on your site, you’re doing something right. The second benefit comes from the link passing juice: link to your blog from the YouTube video.

(Side note: you should be incorporating YouTube videos in your content, as it has been reported that a YouTube video embed acts as some sort of weighted ranking factor.)

How to rank YouTube videos for SEO (to pass link juice and get more visibility)

Step 1. Do your Keyword Research first! It’s going to be pointless if you try to rank for a keyword with low search volume or intent!

Step 2. The YouTube Suggest feature is GREAT for long-tail keyword suggestions. It tells you what people are ACTIVELY searching for at the moment. If I type in “Lose Weight,” it gives me a ton of ideal keywords to target, such as Lose Weight Fast, Lose Weight in 1 Week, Lose Weight While You Sleep, Lose Weight Without Exercise, etc. (see the image below for an example)

Step 3. Check out the competitors ranking for the keyword(s) you’re interested in, and see what keyword(s) they’re optimizing their videos around. While YouTube doesn’t show tags anymore, it’s easy to hit Ctrl+U to view the page source and check out the “keyword tags” they’re optimizing the video for. You can use the vidIQ Chrome extension to view these even more easily!

Step 4. Include your keyword in your title once or twice, but be sure to include a call to action. The title is the first thing people see, and you want to entice them to click your video over the others.

Step 5. Include your URL FIRST in the YouTube description, followed by 500+ words of UNIQUE content, and then the URL again. You’ve probably noticed that a lot of YouTube spammers stuff their descriptions with spam content, keywords, etc.; that’s because content matters.

Step 6. Add ONLY 5 keywords in the tags (keywords) field of the video. This is the magic number, and the person who shared this with me would kill me if she knew I shared it online. 🙂

Step 7. Create a custom thumbnail for the video. This doesn’t help rankings per se, but it increases the video’s click-through rate. I use Canva to create my custom graphics; it takes 5 minutes and generally doesn’t cost me anything.

Step 8. PROMOTE The Video on your social media channels, and send it to your email list (if you have one) 2 times with different but relevant subject lines.

Step 9. If you have any related videos, add links to them WITHIN the video descriptions, or add links to new videos you upload to the channel.

Big thanks to YouTube SEO guru Jeff Lenney for providing me with these steps.
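Steps 4 through 6 are concrete enough to check before you hit publish. A minimal Python sketch, assuming you paste in the title, description, and tags yourself (it does not call any YouTube API); the rules encode the numbers from those steps (keyword once or twice in the title, URL first and last in the description, 500+ words in between, exactly five tags), and the sample video is hypothetical. It can’t judge whether the call to action is compelling or the content is unique; those stay manual.

```python
import re

URL_RE = re.compile(r"https?://\S+")

def check_video_metadata(title, description, tags, keyword, target_url):
    """Return a list of problems against Steps 4-6; an empty list means it passes."""
    problems = []
    hits = title.lower().count(keyword.lower())
    if hits not in (1, 2):  # Step 4: keyword once or twice in the title
        problems.append(f"title mentions the keyword {hits} times (want 1-2)")
    words = description.split()
    if not words or words[0] != target_url:  # Step 5: URL first...
        problems.append("description should open with your URL")
    if not words or words[-1] != target_url:  # ...and the URL again at the end
        problems.append("description should close with your URL again")
    body = [w for w in words if not URL_RE.fullmatch(w)]  # ignore URL tokens
    if len(body) < 500:  # Step 5: 500+ words of content
        problems.append(f"description body has {len(body)} words (want 500+)")
    if len(tags) != 5:  # Step 6: exactly five tags
        problems.append(f"{len(tags)} tags (want exactly 5)")
    return problems

# Hypothetical video; "word" stands in for 520 words of real, unique copy.
url = "https://example.com/lose-weight-guide"
description = " ".join([url] + ["word"] * 520 + [url])
tags = ["lose weight", "lose weight fast", "diet tips", "weight loss", "healthy eating"]
print(check_video_metadata("Lose Weight Fast: 7 Tips (Watch Before You Diet!)",
                           description, tags, "lose weight", url))  # []
```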

Wrapping Up

Backlinks can move the needle, but the real skill is identifying how to best implement the tried and true strategies.

Be careful listening to every “expert” on what works and what doesn’t, because I guarantee you that many of the supposed link building strategies that “shouldn’t work”, still work, but require a more…nuanced approach and more expertise in the implementation of the strategy.

As for the backlink prospecting process, I’ve mentioned some ways of identifying opportunities, but I should also mention that Raven has its own competitor backlink analysis tool and its own backlink explorer.


Leave a comment and tell us what’s worked for you!

Learn About Our Backlink Tools

Backlink Explorer lets you see who is linking to any website – either yours or your competitors.